The Pragmatic Engineer

Why Behavioral Interviews Decide Most Hires: Lessons from ~1,000 Amazon Interview Loops

Gergely Orosz & Steve Huynh (ex-Principal Engineer, Amazon)

Apr 21, 2026

Steve Huynh spent 17 years at Amazon and conducted nearly 1,000 interviews — about 600 of them as a Bar Raiser (an Amazon-specific role: an interviewer from outside the hiring team, with veto power, whose job is to ensure every new hire is better than at least 50% of existing employees in that role). After leaving, he spent two years writing Technical Behavioral Interview: An Insider's Guide. This piece is a guest post by Steve, plus an excerpt from Chapter 2 of the book.

The core message: technical interviews are evolving rapidly because of AI, but behavioral interviews still decide most offers — and most candidates massively under-prepare for them. As Steve's own Bar Raiser trainer put it: "Technical skills are the ante. They get you into the game. But they're not what wins you the hand."

Part 1 — Three learnings from ~1,000 interviews

Learning #1: You're over-prepared for one interview and under-prepared for the other

The average candidate spends ~95% of prep time on technical work and ~5% (sometimes literally 0%) on everything else. Steve gets why: technical prep feels concrete. Grind LeetCode → measurable progress. Study system design → you feel sharper. Clear input/output relationship.

And technical rounds are forgiving: even on a problem you've never seen, a competent engineer can reason out loud, take hints from the interviewer, and apply fundamentals. You don't need to have memorized the exact question.

Behavioral rounds are the opposite. When an interviewer asks "Tell me about a time something went wrong on a project and how you handled it," there is no hint they can give you, no fundamentals to fall back on, no reasoning your way through in real time. You either have a prepared story, or you produce word salad while the interviewer watches.

Steve says he saw this play out hundreds of times: a candidate crushes the coding round, then on a "tell me about a difficult decision" question they pick a half-remembered example, ramble, backtrack to add missing context, lose track of the question, and end with "…so yeah, it worked out in the end." Strong coder, didn't matter. Debrief feedback was always a version of: "I couldn't get a concrete answer about their experience. Every story was vague." No offer.

The frustrating part: behavioral prep takes a fraction of the time technical prep does. The marginal returns flip:

- Hour 80 of LeetCode gives you almost nothing you didn't already have at hour 60.

- Hours 1–10 of story prep can completely change the outcome of your behavioral rounds, because almost nobody does it at all.

Fix: Don't slash technical prep. Just reallocate 10 hours out of an 80-hour prep budget to behavioral. That single weekend may be the highest-leverage investment in your whole interview cycle.

Learning #2: How you deliver the story matters as much as the story itself

You can have a genuinely impressive accomplishment and waste it with bad delivery. Steve calls the most common failure mode the "ramble and stumble": the candidate starts talking and you can't tell if they're figuring out the story as they go or have simply never said these words out loud before. Five minutes of context, three backtracks, and by the time they reach the outcome, you've lost the thread of how they got there.

Steve's analogy: if you had a big presentation at work, you'd structure it, rehearse it, do dry runs with a colleague — nobody walks into a presentation and wings it. Yet in a job interview, where the stakes are arguably higher than any single presentation, people walk in having never said their stories out loud, never timed them, never heard how they sound.

He pushes back on the common worry that rehearsing is "fake" or "inauthentic":

You wouldn't expect to be good at golf without practicing at the driving range. You wouldn't expect to give a great keynote the first time you stepped on stage. Nobody calls a musician fake for rehearsing before a concert.

The "real you" isn't the version that stumbles through unrehearsed answers under pressure. The real you is the one who can clearly communicate what you've done and what you're capable of.

Fix — a concrete drill: Start with the two questions that come up in virtually every interview: "Tell me about yourself" and "Why do you want to work here?" Write down your answers. Record yourself delivering them. Watch the recording. Where did you ramble? Where did you fill space with "um"/"like"? Did you look nervous? Repeat until you watch it back and think "that sounds like someone I'd want to work with." Then do the same for your career stories. Steve claims 30 minutes of this beats 20 hours of coding exercises for interview performance.

Learning #3: The interview is an audition, not an exam

Most candidates think the interview is a test with right answers. It isn't. The interviewer doesn't have a rubric of "correct" responses. They're forming an impression of you as a person — much more nuanced than right/wrong.

By the time you're in the final round, your résumé has already shown the company you can probably code or design at the required level. The final round is also asking a different question: Would we want this person on our team? Would we trust their judgment in a crisis? Would they make our software better or worse?

The key insight Steve learned at Amazon: the coding questions test problem-solving in an artificial environment — nobody actually writes algorithms on a whiteboard during their workday. But the behavioral questions test situations the team handles every single day: navigating disagreement, projects going sideways, influencing without authority, making tradeoffs with incomplete information. Those weren't hypotheticals; that was Tuesday.

So when Steve asked "tell me about a time you pushed back on a stakeholder," he wasn't grading the answer — he was picturing this person in the next prioritization meeting. Every answer was a preview of working with them.

The candidates who treated it as a test tried to figure out what the interviewer wanted to hear and then said exactly that — polished, no rough edges, perfect endings, every decision retroactively correct. Steve would walk out thinking "I have no idea what it would actually be like to work with this person," and across multiple interviewers in the debrief, that uncertainty almost always became a "no."

Fix: For each story, stop asking "what does the interviewer want to hear?" and start asking "what would I want to hear from someone interviewing to join my team?" You'd want how they actually think, the real version including the hard parts and close calls. Give your interviewer that. Honesty about your reasoning beats any polished answer.

The bottom line of all three learnings

The people who get hired can walk into a room and tell a clear story about their work, one that makes the interviewer think "I want to work with that person." That's a skill. Skills get better with practice. Most people never practice it because they don't think of it as something that can be practiced. A little prep here goes further than almost anything else you can do for your career.

A reader's comment in the discussion captured it well: "The debrief question I end up caring about most isn't 'did they answer well?', it's 'could I picture them on our next incident call at 2am?' If I can't form that picture after 45 minutes, it's almost always a no — no matter how polished the answers were."

Part 2 — What companies are actually looking for (excerpt from Chapter 2 of the book)

Behavioral interviews exist to answer two questions: (1) Do you fit the role and the company? (2) If yes, at what level will you be most effective?

- Get fit wrong → rejected regardless of skill.

- Get level wrong → down-leveled, or rejected for being underqualified.

- Get both right → offer at the appropriate level.

Understanding fit: role and company

Technical skill is necessary but not sufficient. Companies have learned the hard way that smart people can fail in the wrong environment. So they assess two extra dimensions:

- Role fit — Can you handle the specific challenges and working conditions of this position? A backend role at a fast-growing startup needs different things than a backend role at a large enterprise, even when the technical skills overlap.

- Company fit — Will you thrive in this organization's environment? This goes beyond surface culture into your working style, decision-making approach, and values.

How companies detect fit (through signals in your stories)

Companies can't just ask "would you fit here?" — no candidate would say no. Instead they listen for signals in your stories.

Role-fit signals come from how you describe handling situations similar to what the role requires:

- Role has ambiguous requirements? → Do your stories show comfort with uncertainty?

- Role needs cross-team coordination? → Do you show you can navigate org complexity?

- Role needs rapid iteration? → Do your examples show shipping fast and adjusting?

Company-fit signals come from the choices you made and how you describe them:

- A "bias for action" company looks for stories where you moved quickly despite incomplete information.

- A "customer obsession" company wants examples of going deep to understand user needs.

- A "radical transparency" company wants stories where you shared information openly even when it was uncomfortable.

Crucially, the same story sends different signals to different companies. Spending three weeks perfecting a solution = "attention to quality" at one place, "analysis paralysis" at another. Shipping fast and patching later = "good judgment" at a growth startup, "recklessness" at a healthcare company.

Common "mis-fits" — the seven axes

Even strong candidates get rejected when behaviors that read as positive at one company read as negative at another. The seven axes Steve highlights:

- Independence vs. Collaboration. If every story is "I went off and built it solo," consensus-driven companies worry you'll steamroll. If every story involves checking with the group first, ownership-focused companies wonder if you can decide without a meeting.

- Speed vs. Thoroughness. Startups want MVPs and iteration; healthcare/finance want validation before release. Methodical-testing stories bore startups; "ship it and fix it" stories terrify medical-device companies.

- Excellence vs. Pragmatism. Some places prize clean architecture above all; others want a working solution by deadline even if rough. Perfectionist stories fail at deadline-driven companies; tech-debt-everywhere stories fail at companies maintaining critical infrastructure.

- Innovation vs. Stability. Some roles need you to challenge existing approaches; others need you to maintain proven systems. "Constantly reinventing things" repels stability teams; "I follow established patterns" disappoints innovation teams.

- Direct vs. Diplomatic. Some cultures want radical candor; others prize harmony and face-saving. Too blunt → fails at relationship-focused companies. Not direct enough → fails at "disagree and commit" cultures.

- Data vs. Intuition. Data-driven cultures distrust gut-feel decisions; experience-driven cultures roll their eyes at three A/B tests for a button color.

- Specialist vs. Generalist. Big companies often want deep domain experts; smaller companies need people wearing multiple hats.

The takeaway: research where the company sits on each axis, then pick stories that match.

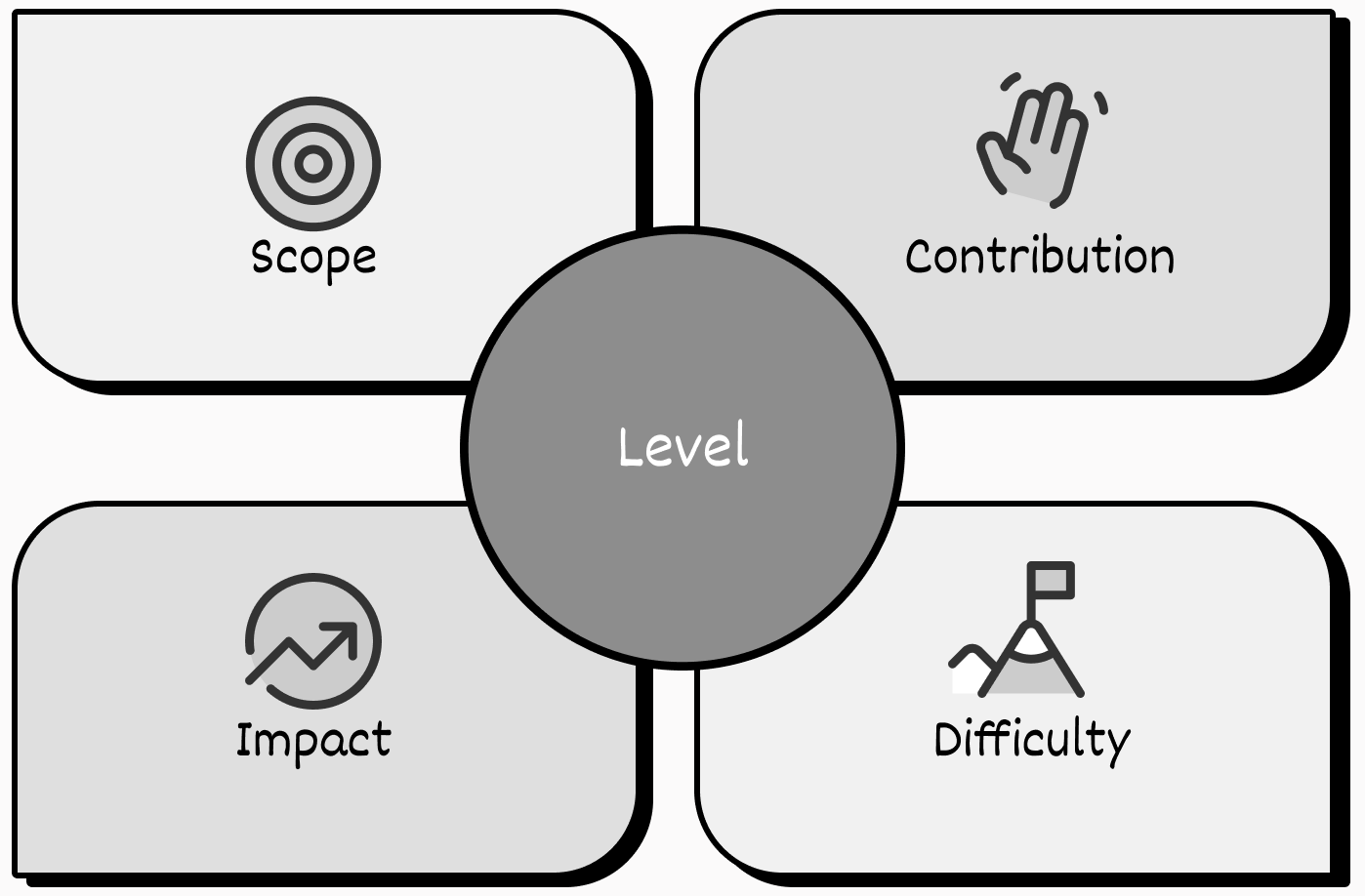

The four dimensions that determine your level

Every story you tell carries signals on four dimensions. Together they show where you operate most effectively.

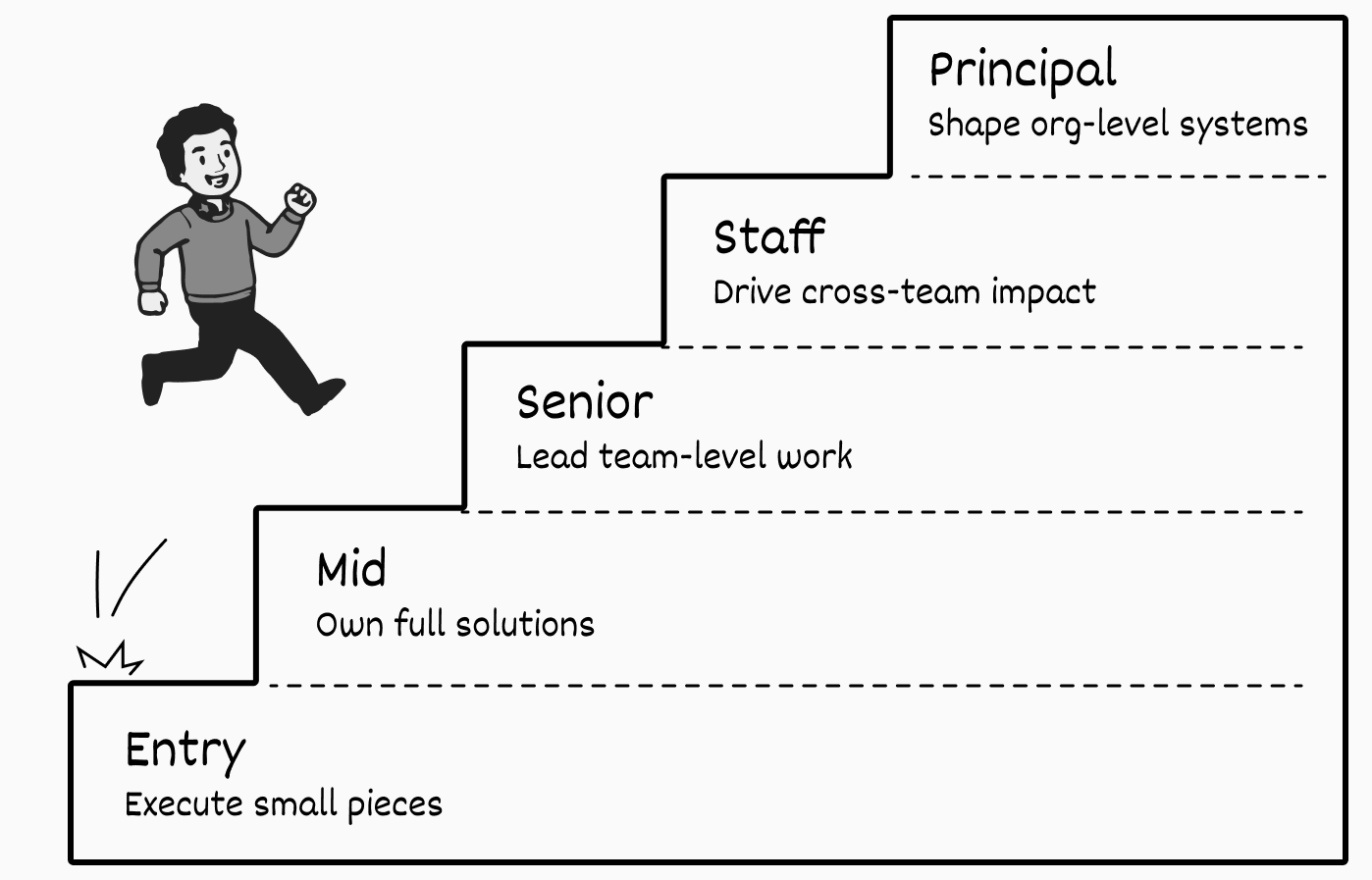

1. Scope — how many people are affected by your work

- Entry: your own productivity; starts to help a few teammates.

- Mid: shapes how a meaningful chunk of your team works.

- Senior: affects your whole team; starts to influence at least one adjacent team; involves close collaboration with product/design partners.

- Staff: directly impacts ≥2 teams; starts to affect the broader division; extends influence into product, design, and program management.

- Principal: affects many teams or large parts of the organization; influence extends into business strategy alongside exec leadership.

2. Contribution — what you actually did (be precise about "I" vs. "we")

- Entry: execute assigned work; own small, well-defined pieces (e.g., implement designs from others, fix bugs).

- Mid: own complete solutions from problem to implementation; help teammates understand your decisions.

- Senior: lead initiatives requiring coordination; make progress under unclear requirements; mentor, architect, drive technical decisions.

- Staff: lead cross-team initiatives; set technical direction when stakeholders have competing priorities; build systems that let other teams solve problems themselves.

- Principal: create new organizational capabilities and ways of working; define the problem before solving it; set technical standards used by dozens of teams.

3. Impact — what changed for the better, ideally with numbers tied to business outcomes

- Entry: personal productivity; minor team help (time saved, bugs prevented).

- Mid: team-level metrics (deploy times, classes of bugs eliminated in your domain).

- Senior: transforms how your team works; impact starts spilling into adjacent teams; ties to product/UX/cost outcomes.

- Staff: improves how multiple teams operate; impact tied to business metrics like revenue, retention, time-to-market.

- Principal: establishes foundations dozens of teams build on; unblocks major business initiatives; impact measured in strategic capability, not just engineering metrics.

4. Difficulty — the complexity, constraints, and trade-offs you managed

Easy problems with big impact are less impressive than hard problems solved well.

- Entry: straightforward problems within established patterns; path forward becomes clear once you understand the problem or ask for help.

- Mid: more moving parts, less obvious solutions; competing requirements; unfamiliar technical complexity; team-internal dependencies.

- Senior: team-level architectural implications; multiple interacting systems; balancing stakeholders with different priorities; addressing both technical and business factors.

- Staff: cross-team trade-offs where teams have genuinely conflicting needs; architectural decisions across diverse contexts; getting agreement when the technically optimal solution differs per team.

- Principal: novel problems with no established pattern; balancing technical excellence vs. delivery speed at organizational scale; decisions requiring exec buy-in.

What each level looks like (side-by-side)

These aren't templates; they're meant to develop your intuition for the difference between, say, a mid-level story and a senior one. Compare adjacent levels and notice what actually changes as you move up.

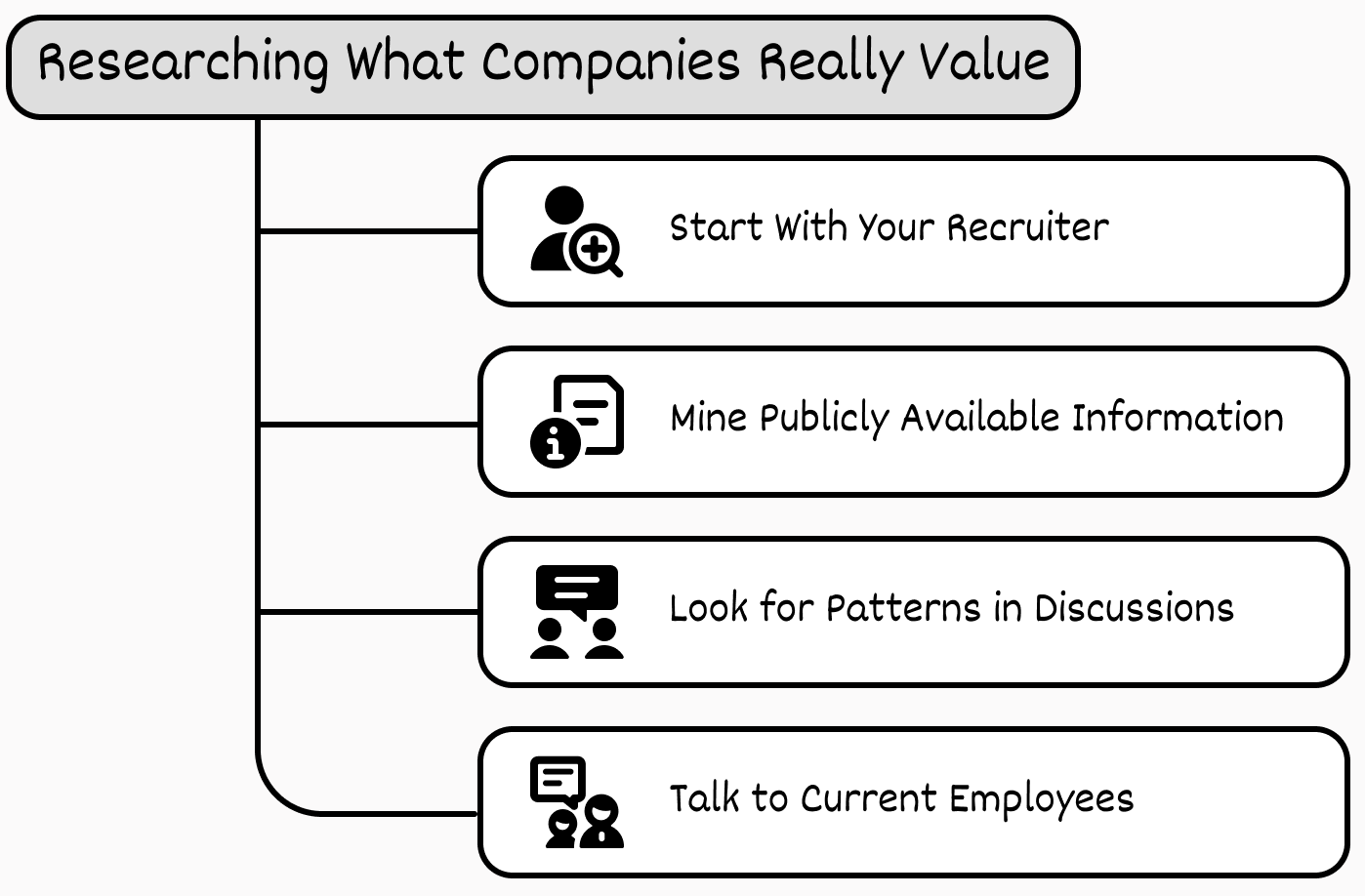

Researching what companies really value

Steve recommends four sources, in order of value:

1. Start with your recruiter. Most candidates treat recruiters as gatekeepers to get past, which wastes their best insider source. Recruiters are paid on accepted offers, so they actively want you to succeed. They have prep materials, know the interviewers' focus areas, and often know the specific behavioral competencies being evaluated. Ask directly: "What should I know about the company's current challenges?" / "What competencies matter most for this role?" / "Can you share interview prep materials?" The example questions in their prep docs have a high chance of being the actual questions asked.

2. Mine publicly available information. Repeated words in job postings reveal what matters — "fast-paced" mentioned several times signals something very different from "compliance." Where to look:

- Engineering blogs — what wins do they celebrate?

- Tech talks/conferences — speed, scale, or innovation?

- Open-source choices — open-sourcing dev tools suggests community focus; willingness to publish internal tools suggests transparency.

- Public API docs — quality reveals how they support users and their own teams.

- Status pages and postmortems — detailed postmortems mean a learning-from-failure culture; published incident response = strong operational culture.

Even companies without engineering blogs leave traces: release cadence reveals development pace; bleeding-edge frameworks suggest innovation focus, while proven tech suggests stability focus.

3. Look for patterns on Glassdoor / Blind / Reddit. Ignore individual rants. Look for repeated themes — if five different people independently mention "lots of process" or "amazing learning culture," that's signal. Pay attention to both complaints and praise: complaints about "too many meetings" can mean either consensus-driven or productivity-killing (both useful to know); praise for "autonomy" means they trust people to decide without checking in. Each reveals what behaviors get rewarded.

4. Talk to current employees. Skip surface culture questions. Ask specifics:

- "When someone gets promoted here, what did they actually do to earn it?"

- "What behaviors get negative feedback?"

- "How does the team make decisions when there's disagreement?"

- "What surprised you most about working here?"

Insiders will tell you what the website never will — that "customer obsession" really means checking usage data before writing code, or that "ownership" means being on call for 2am incidents.

What you're looking for: the real intersection between your experience and what the company cares about. If they prize speed, lean on shipped-fast-and-iterated stories. If they value depth, lean on root-cause stories. If they value collaboration, emphasize cross-team work over solo wins. The research also helps you decide whether you even want this role — if everything points to behaviors you don't want to develop, maybe walk away.

Putting it all together

Companies aren't just deciding whether you can do the job. They're deciding whether you'll thrive in their specific environment and at what level you'll be most effective. Both decisions ride on your stories.

- Fit determines which experiences will resonate. The startup needs someone who ships fast and figures things out alone; the enterprise needs someone who navigates process and builds consensus. Neither is "better" — they reward different approaches.

- Level determines positioning. The same project can demonstrate entry-level execution, mid-level ownership, or senior-level leadership depending on contribution and framing. Misframe it and you'll either be rejected for overreach or down-leveled.

The goal isn't any offer — it's the right offer at the right level at the right company.

Gergely's closing thoughts

Steve's book goes deeper than this excerpt — Gergely highlights:

- High-signal storytelling (Chapter 3): a framework for making your work "stick" with the interviewer.

- 9 competencies with examples — including delivery, earning trust / dealing with conflict, and strategic thinking.

- Examples of what interviewers see as key signals, yellow flags, and red flags.

Gergely's broader point: hiring is similar to dating. Both parties show up with expectations they often don't articulate; sometimes there's a match, sometimes there isn't. As a candidate you're selling — convincing the company you fit what they want.

His Uber hiring-manager experience: roughly half of candidates did zero research on the company before getting on a call with him; only about 1 in 10 had researched the team they were interviewing for — even when the team had public blog posts about their work. Anyone who showed up prepared stood out instantly on the "motivation" axis.

He acknowledges the "if they don't want me as I am, they don't deserve me" stance is valid — but it works the same way as showing up to a first date in sweatpants: fine if you're highly in-demand, expensive otherwise. For most engineers, putting in the extra effort on behavioral prep is worth it.

Key takeaways

- Most rejections of technically strong candidates happen in the behavioral round, not the technical one. "Technical skills are the ante."

- Reallocate ~10 hours from your 80-hour prep budget to story prep — highest-leverage hours of the entire cycle.

- Practice delivery on camera. Ramble-and-stumble is the most fixable failure mode and the one that kills the most otherwise-strong candidates. Start with "Tell me about yourself" and "Why do you want to work here?"

- Treat the interview as an audition, not an exam. Show how you actually think — the close calls, the trade-offs, the messy parts — so the interviewer can picture working with you (especially "on a 2am incident call").

- Companies assess fit (role + company) and level (scope, contribution, impact, difficulty) — both via signals in your stories. Same story, different signal at different companies.

- Pick stories that match the seven fit axes (independence/collaboration, speed/thoroughness, excellence/pragmatism, innovation/stability, direct/diplomatic, data/intuition, specialist/generalist) for that specific company.

- Research the company hard: recruiter first, then engineering blogs / postmortems / OSS choices, then patterns on Blind/Glassdoor/Reddit, then current employees with specific questions.

- Goal isn't any offer — it's the right offer at the right level at the right company.
