Engineering Leadership

Salesforce is Going All-In on AI, Neglecting User Interfaces

Gregor Ojstersek

Apr 27, 2026


Gregor attended Salesforce TDX 2026 in San Francisco and walked away with a clear takeaway: Salesforce, a company famous for its interface-heavy product, is now betting that the future of enterprise software is AI agents calling APIs — not humans clicking through UIs. The article shares what was announced, what Salesforce's AI Research team thinks the big trends are, and how Salesforce's own engineers are using AI day-to-day.

He talked with several Salesforce product and engineering leaders (Gary Lerhaupt, Itai Asseo, Dan Fernandez, Nick Johnston) and noted that 80–90% of all talks and workshops at TDX were AI-related. More broadly, conversation in San Francisco is dominated by AI agents, MCP, vibe coding, and AI adoption.

1. Salesforce Bets on AI Agents

The thesis Salesforce is now organizing around:

Future enterprise software will be run largely by AI agents, not humans clicking through user interfaces.

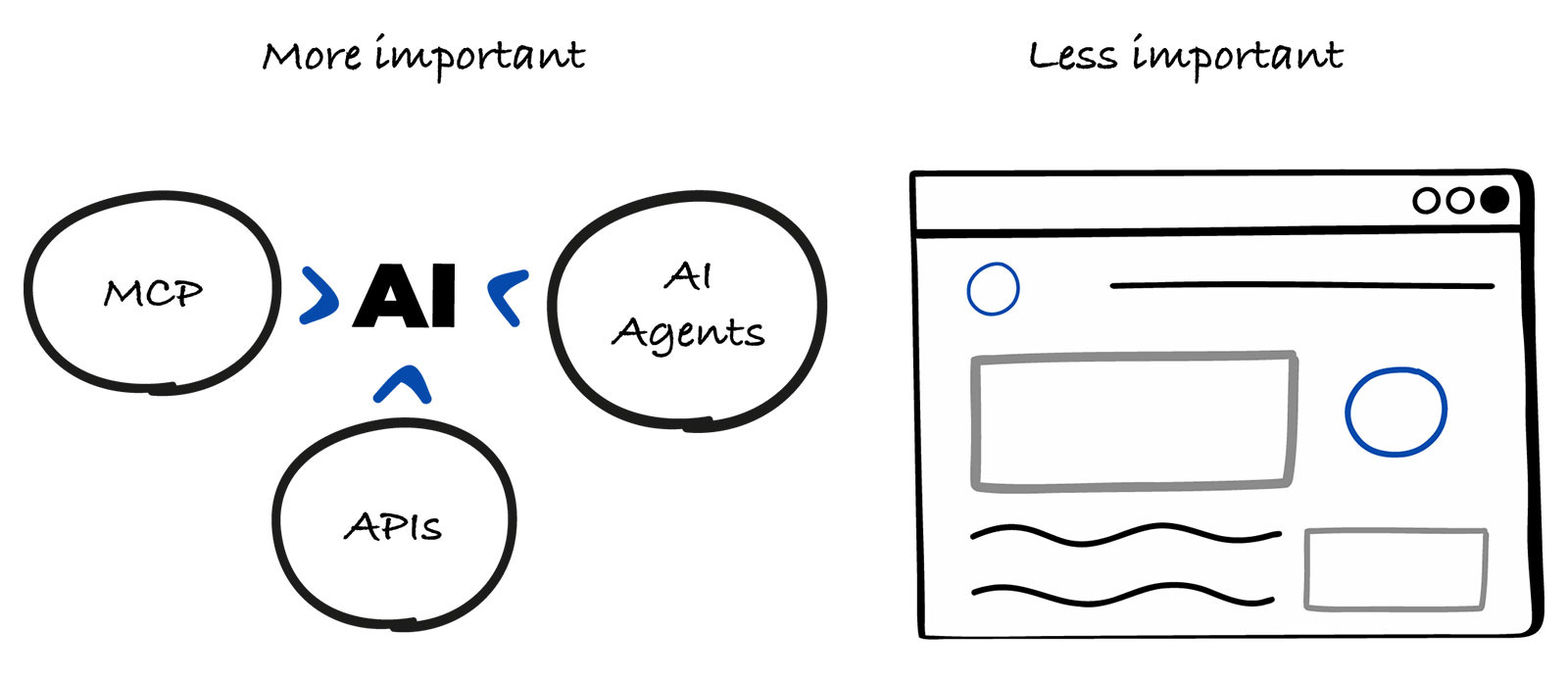

For 25 years, "using Salesforce" meant a human opening a console, clicking into a case, manually updating its status. Salesforce now thinks that in the Agentic Enterprise, the navigators are agents — and agents don't open browsers. They call APIs, invoke MCP tools, and run CLI commands. So Salesforce is shifting investment away from UI and into automation.

The strategic question this raises for any company building software today:

Is it worth investing in UI, or should we focus a lot more on automation?

For Salesforce, the answer is clearly automation.

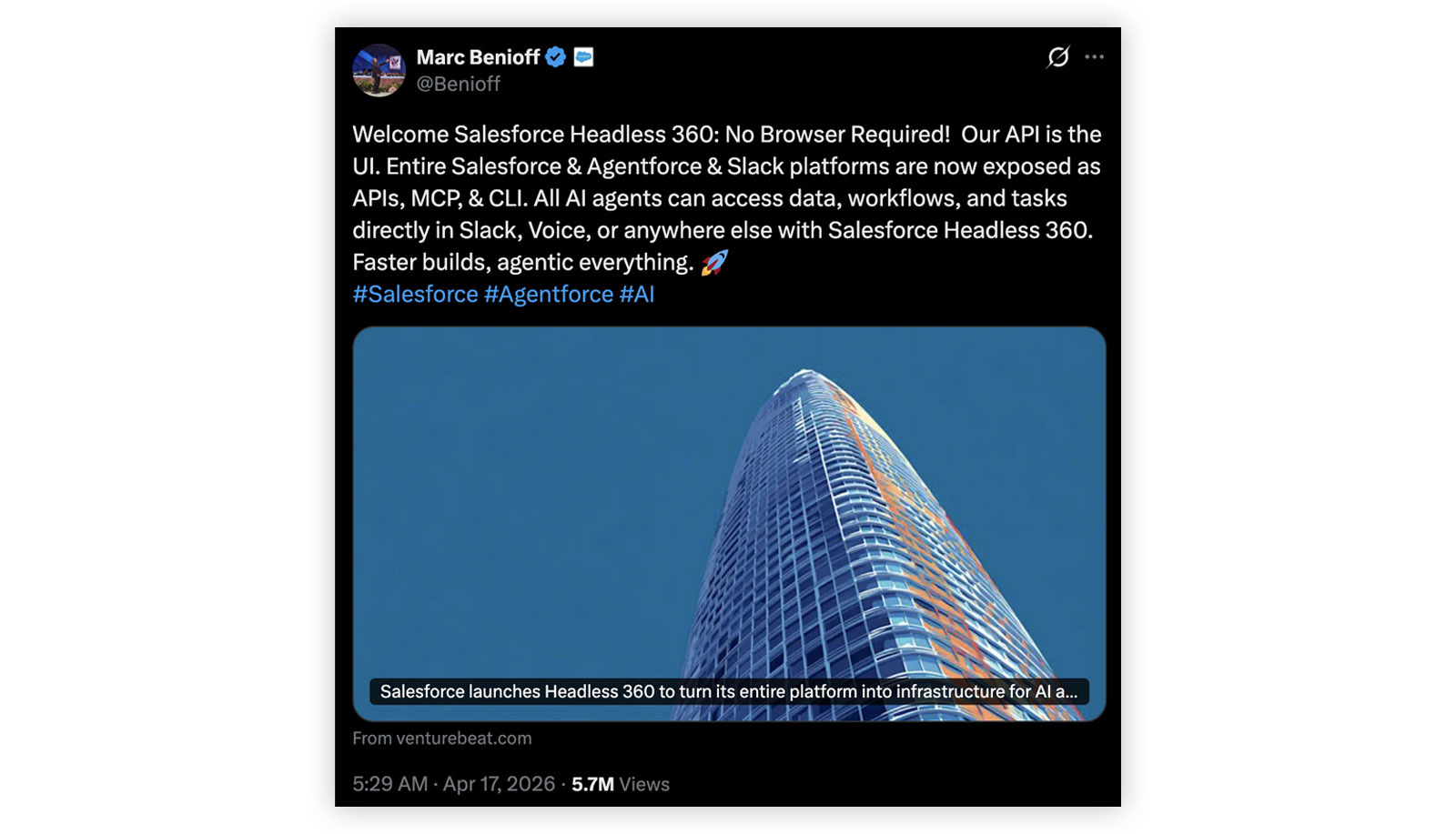

Headless 360

The flagship announcement. Its tagline gives the whole game away:

Everything on Salesforce is now an API, MCP tool, or CLI command, and agents can use all of it.

The provocative question on the announcement page is literally: "Why should you ever log into Salesforce again?" Headless 360 exposes the entire platform as machine-callable surface area so agents can drive the system end-to-end.
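To make the "everything is an API, MCP tool, or CLI command" idea concrete, here is a minimal sketch of a single platform operation exposed as a named, self-describing tool that an agent invokes with structured arguments instead of a click path. Everything in it is hypothetical: `ToolRegistry`, the `update_case_status` tool, and the in-memory `CASES` store are illustrations, not Salesforce's Headless 360 API or the official MCP SDK.

```python
from dataclasses import dataclass, field
from typing import Any, Callable

# Hypothetical in-memory stand-in for platform records (not a real Salesforce object).
CASES: dict[str, dict[str, Any]] = {"500A1": {"subject": "Login fails", "status": "New"}}

@dataclass
class Tool:
    name: str
    description: str
    params: dict[str, type]  # parameter name -> expected Python type
    handler: Callable[..., Any]

@dataclass
class ToolRegistry:
    tools: dict[str, Tool] = field(default_factory=dict)

    def register(self, tool: Tool) -> None:
        self.tools[tool.name] = tool

    def describe(self) -> list[dict[str, Any]]:
        # What an agent reads to discover which operations exist and how to call them.
        return [{"name": t.name, "description": t.description,
                 "params": {k: v.__name__ for k, v in t.params.items()}}
                for t in self.tools.values()]

    def call(self, name: str, args: dict[str, Any]) -> Any:
        # Validate arguments against the declared parameters before dispatching.
        tool = self.tools[name]
        if set(args) != set(tool.params):
            raise ValueError(f"{name} expects {sorted(tool.params)}, got {sorted(args)}")
        for key, expected in tool.params.items():
            if not isinstance(args[key], expected):
                raise TypeError(f"{key} must be {expected.__name__}")
        return tool.handler(**args)

def update_case_status(case_id: str, status: str) -> dict[str, Any]:
    case = CASES[case_id]
    case["status"] = status
    return {"case_id": case_id, **case}

registry = ToolRegistry()
registry.register(Tool("update_case_status", "Set the status of a support case.",
                       {"case_id": str, "status": str}, update_case_status))

# An agent issues a structured call instead of opening a console and clicking.
result = registry.call("update_case_status", {"case_id": "500A1", "status": "Closed"})
```

A real MCP server advertises each tool with a JSON Schema for its inputs; the plain name-to-type map here only keeps the sketch dependency-free.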

The author's read: the UI is becoming less important; automation is becoming Salesforce's moat.

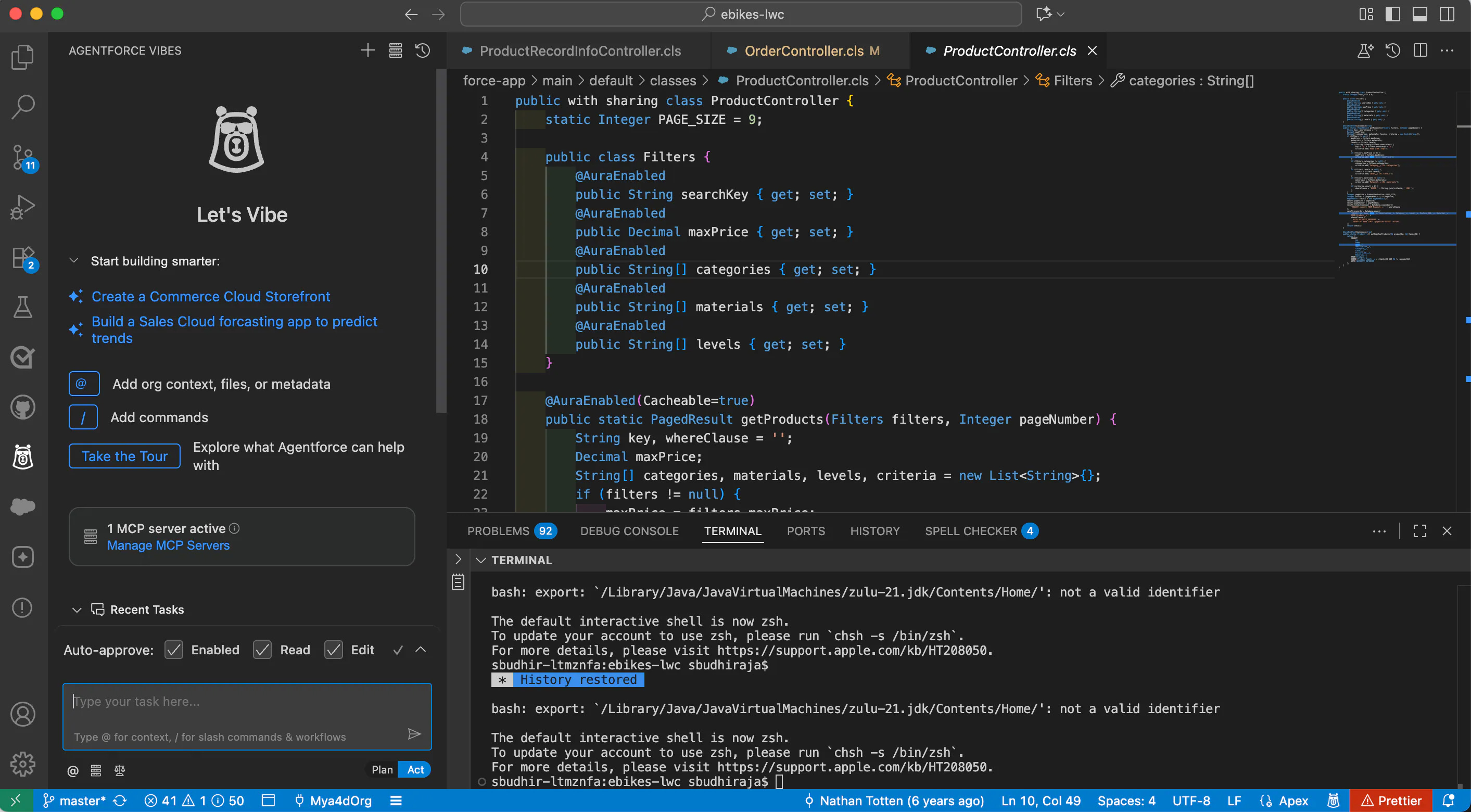

Agentforce Vibes

A new release (v2.0) of Salesforce's AI coding assistant, basically Salesforce's answer to GitHub Copilot. It's available as a VS Code extension or directly in the browser. You write prompts in the IDE and pick plan mode (it only gives you a plan) or agent mode (it actually does the work).

Gregor tried it in two workshops: building an app from requirements, and debugging a Lightning Web Component. Verdict: good if you're building on Salesforce (it's optimized for that), but for general development work he'd still pick Cursor or Claude Code. A notable gap: there's no standalone desktop app for Mac, Linux, or Windows, only the VS Code extension or the browser, while most developers build in native desktop IDEs.

Agentforce (the agent platform) — three new things

- Open-sourcing Agent Script. Notable because Salesforce has historically built its own platform-specific stacks (Apex, LWC). Agent Script is a language for defining how an AI agent reasons and what it's responsible for. Open-sourcing it is a real change in posture.

- ADLC — Agent Development Lifecycle. A set of skills for Claude Code covering building, deploying, testing, and monitoring Agentforce agents. Interesting signal: Salesforce is shipping tooling on top of a competitor's coding agent rather than forcing everyone into their own.

- Agentforce Labs. A site where you can start building agents directly in the browser or browse example use cases.

2. Three Key AI Trends From Salesforce AI Research

Insights from a conversation with Itai Asseo, VP of Salesforce AI Research.

A useful framing he introduced: while AGI (Artificial General Intelligence) is aspirational, EGI — Enterprise General Intelligence — is the realistic and urgent target for enterprise companies. EGI means using AI tools and systems to perform business-specific tasks with high capability and consistency. That, not AGI, is what Salesforce is actually building toward, and it's why the agent-vs-UI bet makes sense.

Trend 1 — Simulation Environments

Itai's analogy:

Athletes train in safe, controlled places before real games. AI agents need the same thing.

Before you put an agent in front of real customers and real data, you need a sandbox where it can fail safely. A core piece of this is synthetic data — fake-but-realistic data you generate so the agent can practice without exposing private or sensitive real records. Think of Formula 1 drivers spending hours in simulators before getting in an actual car.
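A minimal sketch of synthetic data generation for a simulation sandbox, assuming a customer-support domain: it produces fake-but-plausible case records from a seeded random generator, so a run can be replayed exactly and no real customer record is ever read. The field names and vocabularies are hypothetical, and this is not a Salesforce tool.

```python
import random
from dataclasses import dataclass

# Hypothetical vocabularies; a real generator would be tuned to your own domain.
FIRST_NAMES = ["Ana", "Ben", "Chen", "Dara", "Eli", "Fatima"]
LAST_NAMES = ["Novak", "Okafor", "Silva", "Tanaka", "Weber"]
ISSUES = ["billing error", "login failure", "shipping delay", "refund request"]
CHANNELS = ["email", "chat", "voice"]

@dataclass(frozen=True)
class SyntheticCase:
    case_id: str
    customer: str
    email: str
    issue: str
    channel: str
    priority: int

def generate_cases(n: int, seed: int = 42) -> list[SyntheticCase]:
    """Generate n fake-but-plausible support cases; no real customer data is touched."""
    rng = random.Random(seed)  # seeded so a simulation run can be replayed exactly
    cases = []
    for i in range(n):
        first, last = rng.choice(FIRST_NAMES), rng.choice(LAST_NAMES)
        cases.append(SyntheticCase(
            case_id=f"SIM-{i:05d}",
            customer=f"{first} {last}",
            # example.com is reserved for documentation, so these addresses reach no one
            email=f"{first.lower()}.{last.lower()}{i}@example.com",
            issue=rng.choice(ISSUES),
            channel=rng.choice(CHANNELS),
            priority=rng.randint(1, 4),
        ))
    return cases

cases = generate_cases(100)
```

Seeding matters in simulation: if the agent fails on case `SIM-00042`, regenerating with the same seed reproduces that exact case for debugging. The `channel` field is one hook for exercising different modalities such as text and voice.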

Simulations are also where you stress-test how the agent behaves: try different modalities (text, voice), different scenarios, find where it falls over, fix it, repeat. It's also a great on-ramp for companies new to AI — start in simulation, learn cheaply, then promote to production.

This is the territory of AI evals (systematically scoring AI behavior on labeled scenarios), a topic Gregor has covered in earlier articles co-written with Hamel Husain.
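To make "systematically scoring AI behavior on labeled scenarios" concrete, here is a minimal eval harness sketch: each scenario pairs an input with a labeled expected outcome, the agent runs on all of them, and the harness reports a pass rate plus the failures to inspect. The toy keyword router stands in for a real agent and is purely hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Scenario:
    prompt: str
    expected: str  # the labeled correct outcome for this input

def evaluate(agent: Callable[[str], str], scenarios: list[Scenario]) -> tuple[float, list[Scenario]]:
    """Run the agent on every labeled scenario; return the pass rate and the failures."""
    if not scenarios:
        raise ValueError("need at least one scenario")
    failures = [s for s in scenarios if agent(s.prompt) != s.expected]
    return 1 - len(failures) / len(scenarios), failures

# Hypothetical agent under test: decides which queue a customer message goes to.
def toy_router(prompt: str) -> str:
    text = prompt.lower()
    if "refund" in text or "charge" in text:
        return "billing"
    if "password" in text:
        return "account"
    return "general"

SCENARIOS = [
    Scenario("I was charged twice this month", "billing"),
    Scenario("Please reset my password", "account"),
    Scenario("Where is my refund?", "billing"),
    Scenario("I can't sign in to my account", "account"),  # the toy router misses this one
]

rate, failed = evaluate(toy_router, SCENARIOS)
```

Exact-match grading only suits tasks with one correct answer, like routing; free-form responses usually need a rubric or a human or model grader. The loop stays the same: run, score, inspect the failures, fix, repeat.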

Trend 2 — Ambient Intelligence

The idea: AI is always on, in the background, proactively helping without you asking.

Itai's analogy is the movie Her — an omnipresent agent that's always available and takes initiative.

Concrete example Salesforce shared: PISA (Proactive In-Meeting Support Agent), an early beta for sales reps. PISA listens in the background during a sales call, recognizes topics in real time, and pushes relevant talking points to the rep — without being prompted. Another use case: a customer-service agent receiving instant, situation-specific recommendations while on a call.

The benefit is that the help fits into the work you're already doing — no context switch, no "let me go ask the AI." Gregor notes personally that getting the right recommendation without having to ask is one of the most valuable things AI can do.
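A rough sketch of the ambient pattern behind something like PISA, assuming the call has already been transcribed into utterances: the assistant watches each utterance as it arrives and pushes a talking point the first time a topic comes up, without the rep asking. Keyword matching stands in for whatever speech and topic models a real system uses; `PLAYBOOK` and its tips are hypothetical, and this is not how PISA is implemented.

```python
from typing import Iterable, Iterator

# Hypothetical playbook: topic -> (trigger phrases, talking point to surface).
PLAYBOOK = {
    "pricing": (("price", "cost", "budget"), "Mention the annual-billing discount."),
    "security": (("security", "compliance", "soc 2"), "Offer the security whitepaper."),
    "competitor": (("competitor", "switching from"), "Share the migration case study."),
}

def ambient_assist(transcript: Iterable[str]) -> Iterator[tuple[int, str, str]]:
    """Scan utterances as they arrive; push each topic's talking point the first time it appears."""
    surfaced: set[str] = set()
    for index, utterance in enumerate(transcript):
        text = utterance.lower()
        for topic, (triggers, tip) in PLAYBOOK.items():
            if topic not in surfaced and any(t in text for t in triggers):
                surfaced.add(topic)  # don't nag the rep with the same tip twice
                yield index, topic, tip

call = [
    "Thanks for joining today.",
    "Our main concern is the cost for a team of fifty.",
    "And what does the price look like next year?",
    "Also, how do you handle compliance audits?",
]
suggestions = list(ambient_assist(call))
```

Because `ambient_assist` is a generator, it can consume a live stream of utterances and yield suggestions the moment they apply, rather than waiting for the call to end.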

Trend 3 — Agent-to-Agent Communication

As companies deploy more agents, those agents have to talk to each other, not just to humans. Today's agents are mostly designed for human conversation, so when you wire several together, things get messy or superficial. They look like they're collaborating, but the substance often isn't there.

The fix is a shared protocol or "common language" so agents stay aligned, share state, and converge on the same goal. This becomes mission-critical as orgs move from one agent to many connected agents — if the connective tissue is bad, the whole system is bad.

To pressure-test this, Salesforce ran a study on Moltbook, an AI-agent-only social platform, examining 800K posts, 3.5M comments, and 78K agent profiles. The conclusion:

Our findings reveal agents produce diverse, well-formed text that creates the surface appearance of active discussion, but the substance is largely absent.

In other words: agent-to-agent communication today is mostly theater. There's real work to do before multi-agent systems are reliable.
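A minimal sketch of what the shared "common language" described above could look like, assuming a simple typed message envelope: every message names the goal it serves and carries explicit state, and a receiver rejects messages that don't fit instead of producing a plausible-sounding reply. The envelope fields, intent vocabulary, and version tag are hypothetical and not taken from any specific agent-to-agent standard.

```python
from dataclasses import dataclass, field
from typing import Any

PROTOCOL_VERSION = "0.1"                         # hypothetical version tag
ALLOWED_INTENTS = {"request", "inform", "done"}  # hypothetical intent vocabulary

@dataclass
class AgentMessage:
    sender: str
    recipient: str
    goal_id: str   # the shared objective every participant is working toward
    intent: str    # what the sender wants the recipient to do with this message
    state: dict[str, Any] = field(default_factory=dict)  # facts the sender is sharing
    version: str = PROTOCOL_VERSION

class Agent:
    def __init__(self, name: str, goal_id: str) -> None:
        self.name = name
        self.goal_id = goal_id
        self.shared_state: dict[str, Any] = {}

    def receive(self, msg: AgentMessage) -> None:
        # Reject anything that breaks the protocol or serves a different goal.
        if msg.version != PROTOCOL_VERSION:
            raise ValueError(f"unsupported protocol version {msg.version}")
        if msg.recipient != self.name:
            raise ValueError(f"addressed to {msg.recipient}, not {self.name}")
        if msg.goal_id != self.goal_id:
            raise ValueError(f"goal mismatch: {msg.goal_id} != {self.goal_id}")
        if msg.intent not in ALLOWED_INTENTS:
            raise ValueError(f"unknown intent {msg.intent!r}")
        # Merge the sender's facts so both agents converge on the same view of the work.
        self.shared_state.update(msg.state)

billing = Agent("billing-agent", goal_id="resolve-case-42")
billing.receive(AgentMessage("triage-agent", "billing-agent", "resolve-case-42",
                             "inform", {"refund_eligible": True}))
```

The design choice is to make alignment checkable: a shared `goal_id` and explicit `state` give each agent something concrete to verify, which is exactly what free-form conversational text lacks.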

3. How Salesforce Engineers Actually Use AI

From Gregor's conversation with Dan Fernandez, VP of Product for Developer Services at Salesforce — who demoed all of this live from his IDE.

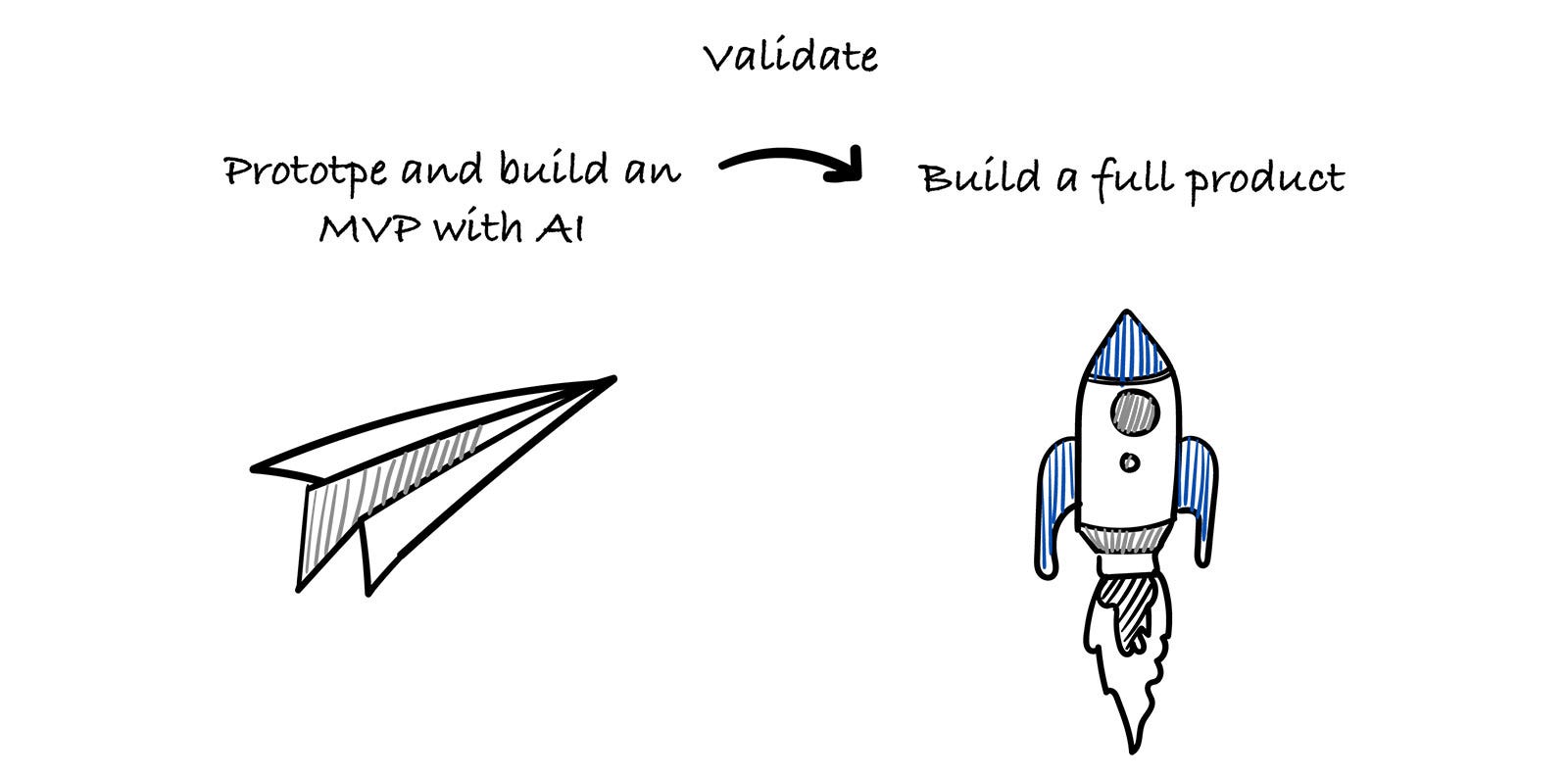

Prototyping and building MVPs

The way Salesforce builds products has changed significantly. Instead of jumping into a full build, a small cross-functional group spins up quick prototypes and MVPs with AI, gets them in front of real users, and validates the idea first.

Dan gave a vivid example: they once built a feature-rich executive dashboard meant for big screens — only to discover executives almost exclusively use their phones. AI-powered prototyping makes it cheap to find that out before committing to the wrong build.

Planning — where they use AI most heavily

They have a CLI command in their dev tools called "deep planning". It's a back-and-forth conversation that helps you think through a feature before any code is written. It asks things like:

- What do you want to build?

- How should it work?

- What are the requirements?

The explicit goal is not to generate code yet — it's to produce a solid plan. For example, if you say "asset tracker app," it'll ask what items you want to track, how the app should behave, then walk you through data, structure, and integration.

A key behavior: it checks whether something already exists that can be reused — internal tools, APIs, modules — so you don't rebuild from scratch.

Plan first, reuse what you can, and only build what's really needed.
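A sketch of the "reuse before you build" step, assuming the planning answers have been captured and an internal catalog of existing APIs and components is available to search: each requirement is checked against the catalog first, and only unmatched requirements land on the build list. This is not Salesforce's deep planning command; `CATALOG`, `Plan`, and `make_plan` are hypothetical, and keyword overlap is a crude stand-in for a real search.

```python
from dataclasses import dataclass, field

# Hypothetical catalog of things that already exist in the organization.
CATALOG = {
    "asset-api": {"asset", "inventory", "tracking"},
    "barcode-scanner-component": {"barcode", "scan", "mobile"},
    "notification-service": {"alert", "email", "notification"},
}

@dataclass
class Plan:
    what: str
    how: str
    requirements: list[str]
    reuse: dict[str, list[str]] = field(default_factory=dict)  # requirement -> reusable pieces
    build: list[str] = field(default_factory=list)             # requirements with no match

def make_plan(what: str, how: str, requirements: list[str]) -> Plan:
    """Record the planning answers, then check each requirement against the catalog first."""
    plan = Plan(what, how, requirements)
    for req in requirements:
        words = set(req.lower().split())
        matches = sorted(name for name, tags in CATALOG.items() if words & tags)
        if matches:
            plan.reuse[req] = matches
        else:
            plan.build.append(req)  # only genuinely new work ends up here
    return plan

plan = make_plan(
    what="asset tracker app",
    how="mobile-first; staff scan items when they move",
    requirements=["track asset location", "scan barcode labels", "monthly depreciation report"],
)
```

For the asset tracker example, two of the three requirements map to existing pieces, leaving only the depreciation report as new code.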

Code quality and tests — Code Analyzer

Code Analyzer is their static-analysis tool with ~700 rules. It's homegrown but composed of multiple engines: ESLint for JS, an open-source Apex linter, plus their own logic that traces how code segments connect to API calls — so it catches structural issues, not just per-line ones.

It runs at every stage:

- while you're writing code (live feedback in the IDE),

- automatically when a PR is opened,

- in CI, on merge, and on deploy to production.
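The article only describes Code Analyzer at a high level, so the following is a sketch of the general multi-engine idea rather than its actual design: a per-line rule and a structural rule run side by side and their findings are merged. The structural rule builds a small call graph and flags loops that reach an external API call, even through a helper, which a line-by-line check cannot see; API calls inside loops are a classic hazard on platforms with per-transaction limits. It analyzes Python with the standard `ast` module as a stand-in for Apex or JavaScript, and the rule and the `http_get`/`http_post` names are hypothetical.

```python
import ast
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Finding:
    engine: str
    line: int
    message: str

Engine = Callable[[str], list[Finding]]
API_CALLS = {"http_get", "http_post"}  # hypothetical names treated as external API calls

def line_engine(source: str) -> list[Finding]:
    """Per-line rule, in the spirit of a linter: flag leftover debug prints."""
    return [Finding("line", n, "debug print left in code")
            for n, text in enumerate(source.splitlines(), start=1)
            if text.strip().startswith("print(")]

def _called_names(node: ast.AST) -> set[str]:
    return {c.func.id for c in ast.walk(node)
            if isinstance(c, ast.Call) and isinstance(c.func, ast.Name)}

def structural_engine(source: str) -> list[Finding]:
    """Cross-function rule: flag loops that reach an external API call, even via helpers."""
    tree = ast.parse(source)
    # Simple call graph: function name -> names it calls directly.
    graph = {fn.name: _called_names(fn) for fn in ast.walk(tree) if isinstance(fn, ast.FunctionDef)}

    def reaches_api(name: str, seen: set[str]) -> bool:
        if name in API_CALLS:
            return True
        if name in seen or name not in graph:
            return False  # already explored, or an unknown/builtin function
        seen.add(name)
        return any(reaches_api(callee, seen) for callee in graph[name])

    findings = []
    for loop in (n for n in ast.walk(tree) if isinstance(n, (ast.For, ast.While))):
        for name in sorted(_called_names(loop)):
            if reaches_api(name, set()):
                findings.append(Finding("structural", loop.lineno, f"loop reaches API call via {name}()"))
    return findings

def analyze(source: str, engines: list[Engine]) -> list[Finding]:
    # Run every engine and merge the results, sorted by line for IDE-style display.
    return sorted((f for engine in engines for f in engine(source)), key=lambda f: f.line)

SAMPLE = """
def fetch_price(sku):
    return http_get("/prices/" + sku)

def price_all(skus):
    total = 0
    for sku in skus:
        total += fetch_price(sku)
    return total

print(price_all(["a"]))
"""
findings = analyze(SAMPLE, [line_engine, structural_engine])
```

The sample source is only parsed, never executed, so `http_get` doesn't need to exist. The same `analyze` call could run in an editor hook, a PR check, or a CI step; only the trigger changes.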

Testing gets the same AI help. Salesforce requires 80% test coverage before code can deploy to the platform, historically a real grind. With AI generating tests, hitting that bar is dramatically easier.
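The coverage bar itself is simple arithmetic: covered lines divided by coverable lines. Here is a minimal sketch of a deploy gate, assuming coverage is aggregated across all files and using the 80% figure from the article as a parameter; the file names and counts are hypothetical, and the platform's exact rules for what counts toward coverage aren't modeled.

```python
def coverage_percent(covered_lines: int, total_lines: int) -> float:
    if total_lines == 0:
        return 100.0  # nothing executable to cover
    return 100.0 * covered_lines / total_lines

def deploy_gate(per_file: dict[str, tuple[int, int]], threshold: float = 80.0) -> tuple[bool, float]:
    """Aggregate (covered, total) line counts across files and compare with the threshold."""
    covered = sum(c for c, _ in per_file.values())
    total = sum(t for _, t in per_file.values())
    pct = coverage_percent(covered, total)
    return pct >= threshold, pct

ok, pct = deploy_gate({"AccountService": (180, 200), "CaseRouter": (60, 100)})
```

With these numbers, `AccountService` is at 90% (180/200) and `CaseRouter` at 60% (60/100), but the aggregate is 240/300 = 80%, so the gate passes. An aggregate bar lets a well-tested file carry a poorly tested one, which is why some teams also set a per-file minimum.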

Observability — Scale Center and ApexGuru

Scale Center shows real-time production performance — what's healthy, what's slow. Inside it, ApexGuru analyzes how code actually behaves in production and flags what to fix. Critically, the insights surface directly inside the developer's IDE, so you don't have to context-switch to dashboards.

The system warns proactively about approaching platform limits, performance regressions, and spikes in 500 errors. With AI on top, when something breaks it can:

- find the root cause,

- explain what went wrong,

- suggest a fix,

- open a PR with the change.

Instead of digging through logs, the engineer reviews a proposed fix.
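A minimal sketch of the "spike in 500 errors" warning, assuming traffic arrives as per-window counts of requests and 5xx responses: a window is flagged when its error rate exceeds a multiple of the recent average and also clears a small absolute floor, so a jump from 0.01% to 0.04% doesn't page anyone. This is a generic moving-average threshold, not how Scale Center or ApexGuru detect problems; `ErrorSpikeDetector` and its defaults are hypothetical.

```python
from collections import deque

class ErrorSpikeDetector:
    """Flag a window whose 5xx error rate jumps well above the recent baseline."""

    def __init__(self, history: int = 12, factor: float = 3.0, min_rate: float = 0.01):
        self.rates = deque(maxlen=history)  # recent per-window error rates
        self.factor = factor                # how many times the baseline counts as a spike
        self.min_rate = min_rate            # ignore noise when absolute error rates are tiny

    def observe(self, requests: int, errors_5xx: int) -> bool:
        rate = errors_5xx / requests if requests else 0.0
        baseline = sum(self.rates) / len(self.rates) if self.rates else None
        self.rates.append(rate)
        if baseline is None:
            return False  # no history yet, nothing to compare against
        return rate >= self.min_rate and rate > self.factor * baseline

detector = ErrorSpikeDetector(history=5)
traffic = [(1000, 4), (1000, 5), (1000, 3), (1000, 4), (1000, 40)]
alerts = [detector.observe(requests, errors) for requests, errors in traffic]
```

Here the first four windows hover around 0.4% errors and the fifth jumps to 4%, roughly ten times the baseline, so only the last window is flagged. Because flagged windows also feed the baseline, a sustained outage eventually stops alerting; a production detector would typically exclude anomalous windows from the baseline.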

Engineers pick their own tools

Even though Agentforce Vibes is the official Salesforce coding assistant, engineers are free to use Claude Code, Cursor, or whatever they prefer. Dan compared it to vim vs emacs — a preference debate, not a correctness debate.

Increasingly, a lot of work also happens in Slack via Slackbot — gathering requirements, making decisions, discussing trade-offs — with the AI bot helping move things forward right inside the conversation.

Takeaways

- The strategic bet: Salesforce is reorienting a 25-year-old UI-centric company around the premise that agents, not humans, are the primary users of enterprise software. Headless 360 is the concrete expression of that bet.

- EGI > AGI for enterprises. Don't wait for general intelligence — focus on doing your specific business tasks reliably with AI now.

- Three frontiers worth investing in today: simulation environments (with synthetic data), ambient/proactive AI (PISA-style), and agent-to-agent protocols — though the last is still immature, per Salesforce's own research.

- Internal AI usage pattern: prototype to validate ideas, use AI heavily in planning (not just coding), keep code quality enforced via continuous static analysis, and bring observability + auto-fix into the IDE. Reuse before you build.

- Tooling pluralism wins. Even Salesforce, with its own coding agent, lets engineers pick the tool that fits — and ships skills for Claude Code, a competitor.

The open question for every other enterprise: if Salesforce is right that interfaces matter less and automation is the moat, how much should you still be investing in UI vs. agent-callable surface area?

