Strategize Your Career

Is AI Making You a Worse Developer? Two 2026 Studies Say Yes — Unless You Steer

Fran Soto

Apr 26, 2026

The uncomfortable opening

A common, almost reassuring, mental shortcut for AI-assisted coding goes like this: the code is there, the tests pass, the feature works — what else is there to check? Fran Soto argues that question is exactly where the rot starts. Working code is not the same as correct code, and correct code is not the same as maintainable code. AI, he says, doesn't make developers lazy by force; it creates conditions where laziness is the path of least resistance. Once an LLM produces code that does what you asked, going back inside it feels like a chore — and that chore is precisely where the real bugs live.

He's not theorizing — he's describing what he watches himself and his Amazon teammates do, and now there's data backing it up.

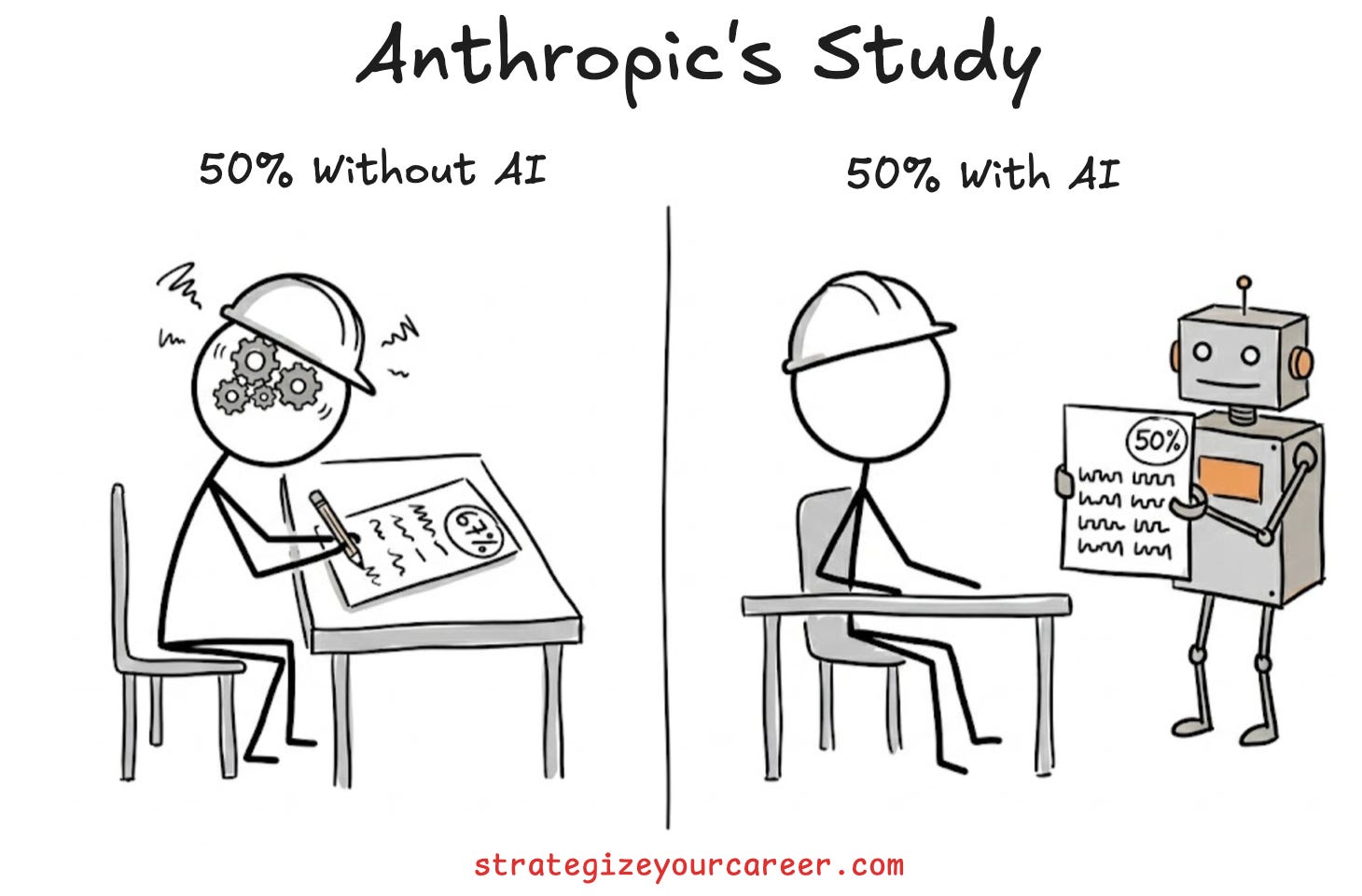

What Anthropic's 2026 randomized controlled trial actually found

In January 2026 Anthropic ran an RCT with 52 junior Python engineers (1–3 years experience). Each had to learn Trio, an async Python library none of them had seen before. Half used Claude throughout the learning process; half worked manually, the way developers learned before AI existed.

Results:

- Time to finish: AI group was ~2 minutes faster. Not statistically significant.

- Comprehension quiz: Manual group 67%, AI group 50%. That 17-percentage-point gap is nearly two letter grades: a real, statistically significant difference with a medium-to-large effect size (Cohen's d ≈ 0.738).

- Worst category for the AI group: debugging — i.e., the exact skill that requires you to actually understand what was built.
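To make the quiz numbers concrete, here is a minimal Python sketch of how Cohen's d is usually computed and what the reported figures imply. Only the 67%, 50%, and 0.738 values come from the article. The per-participant scores are hypothetical, invented to exercise the function, and the implied standard deviation is approximate because the reported means are rounded.

```python
import math
from statistics import mean, stdev


def cohens_d(group_a, group_b):
    """Standardized mean difference using the pooled sample standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    var_a, var_b = stdev(group_a) ** 2, stdev(group_b) ** 2
    pooled_sd = math.sqrt(((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2))
    return (mean(group_a) - mean(group_b)) / pooled_sd


# Figures reported in the article.
manual_pct, ai_pct, reported_d = 67.0, 50.0, 0.738

gap_points = manual_pct - ai_pct             # absolute gap, in percentage points
relative_drop = gap_points / manual_pct      # gap relative to the manual baseline
implied_pooled_sd = gap_points / reported_d  # rearranged from d = gap / pooled SD

print(f"gap: {gap_points:.0f} points, relative drop: {relative_drop:.1%}")
print(f"implied pooled SD: {implied_pooled_sd:.1f} points")

# Hypothetical per-participant quiz scores (NOT the study's data).
manual_scores = [80, 60, 70, 55, 75, 62]
ai_scores = [50, 45, 65, 40, 55, 45]
print(f"d on hypothetical data: {cohens_d(manual_scores, ai_scores):.2f}")
```

The sketch also shows why the wording matters: a drop from 67% to 50% is 17 percentage points, which is roughly a 25% drop relative to the manual baseline.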

Anthropic named the underlying phenomenon the illusion of competence: developers feel fast and capable while failing to internalize what they built. The code runs, but no mental model of it exists in their head.

The point isn't "AI bad." It's that the speed feels like progress while the understanding quietly evaporates.

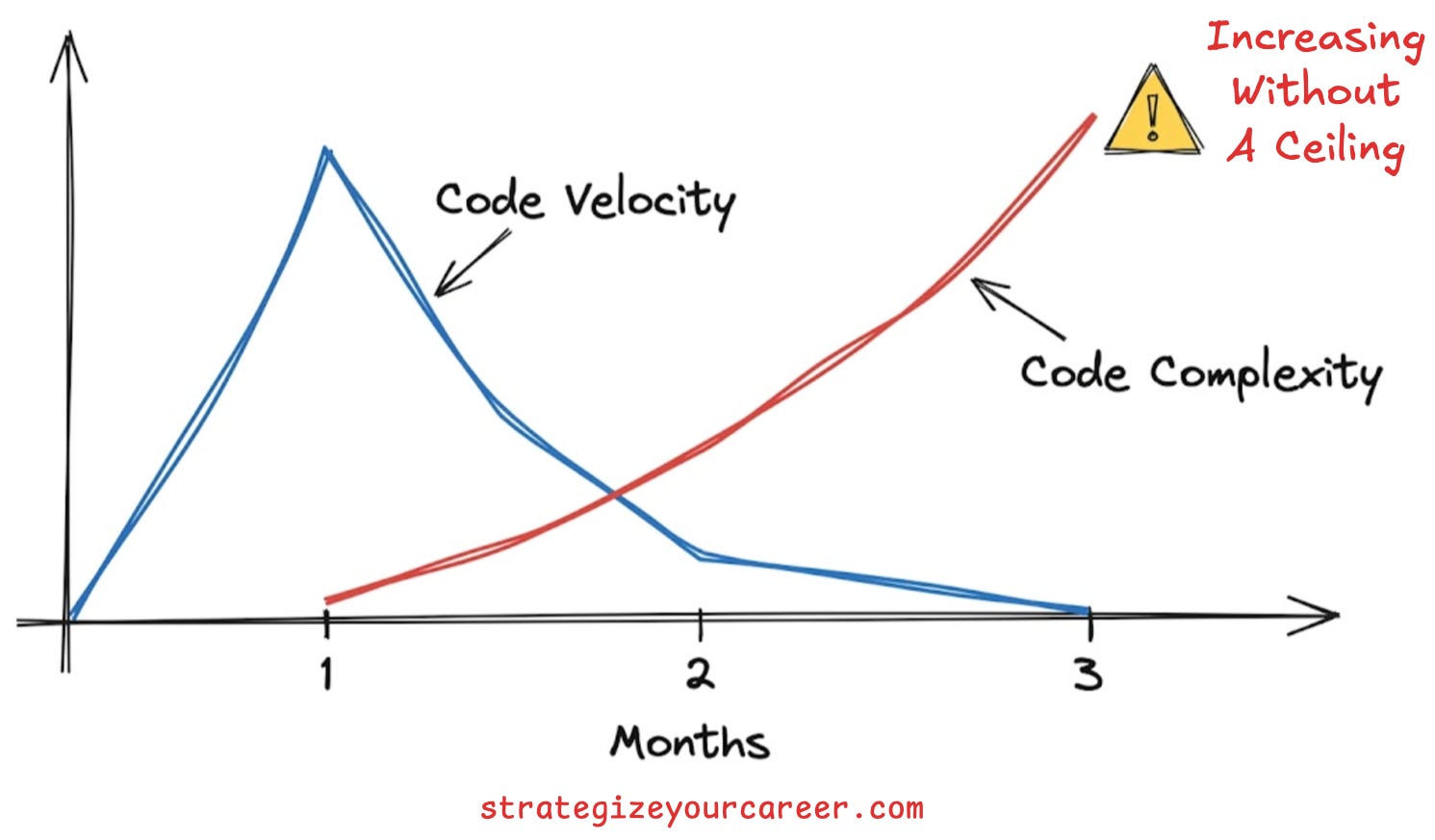

What Carnegie Mellon's 807-repo study found in real codebases

If Anthropic's study is about what happens in your head, the Carnegie Mellon study is about what happens in your codebase. Researchers ran a difference-in-differences analysis across 807 repositories that adopted Cursor between Jan 2024 and Aug 2025, against 1,380 matched control repos that didn't. Quality was measured with SonarQube — a static analyzer most CI pipelines already use, so the metrics aren't exotic.
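For readers unfamiliar with difference-in-differences, the core idea fits in a few lines of Python. This is a simplified sketch of the textbook estimator on group means, not the researchers' actual model. The function name and all repository scores are hypothetical.

```python
from statistics import mean


def diff_in_diff(treated_pre, treated_post, control_pre, control_post):
    """Return (change in treated group) minus (change in control group)."""
    treated_change = mean(treated_post) - mean(treated_pre)
    control_change = mean(control_post) - mean(control_pre)
    return treated_change - control_change


# Hypothetical per-repo complexity scores, invented for illustration only.
adopters_before = [100, 120, 90]
adopters_after = [150, 165, 135]
controls_before = [105, 115, 95]
controls_after = [115, 125, 105]

effect = diff_in_diff(adopters_before, adopters_after, controls_before, controls_after)
print(f"estimated effect of adoption: {effect:+.1f} complexity points")
```

Subtracting the control group's change removes trends that would have happened anyway, so the remainder is attributed to adoption, provided both groups would otherwise have moved in parallel.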

What they found:

- Month 1 after adoption: a 3–5× spike in lines of code written. Big initial burst.

- By month 3: velocity fully reverts to baseline. No sustained gain.

- Security / static-analysis warnings: +30%.

- Code complexity: +41%, growing faster than the volume of code itself.

The researchers call this complexity debt, and Soto's analogy is the one to remember: AI speed is a loan against future maintenance hours. The interest doesn't announce itself — it compounds quietly until someone has to touch that code and the session turns into archaeology. The pattern is exactly what you'd predict if a tool lets people write code faster than they can understand it: more code, more warnings, more complexity, no lasting velocity gain. Faster input, worse output.
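One way to see complexity outpacing volume is to count branch points alongside lines. The sketch below is a rough McCabe-style approximation built on Python's standard `ast` module. It is not SonarQube's metric, and both code snippets are hypothetical.

```python
import ast

# Node types that open an extra execution path (a simplified McCabe-style list).
BRANCH_NODES = (ast.If, ast.For, ast.While, ast.ExceptHandler, ast.IfExp, ast.BoolOp)


def branch_complexity(source: str) -> int:
    """Approximate cyclomatic complexity: 1 + number of branching nodes."""
    tree = ast.parse(source)
    return 1 + sum(isinstance(node, BRANCH_NODES) for node in ast.walk(tree))


def code_lines(source: str) -> int:
    """Count non-blank lines."""
    return sum(1 for line in source.splitlines() if line.strip())


# Hypothetical "before" and "after" versions of the same function.
BEFORE = """
def total(prices):
    subtotal = sum(prices)
    tax = subtotal * 0.2
    return subtotal + tax
"""

AFTER = """
def total(prices, coupon=None, vip=False):
    result = 0
    for p in prices:
        if p < 0:
            continue
        if vip and coupon:
            result += p * 0.8
        elif vip or coupon:
            result += p * 0.9
        else:
            result += p
    return result
"""

line_growth = code_lines(AFTER) / code_lines(BEFORE)
complexity_growth = branch_complexity(AFTER) / branch_complexity(BEFORE)
print(f"lines grew {line_growth:.1f}x, complexity grew {complexity_growth:.1f}x")
```

In this toy case the line count triples while the branch count grows sevenfold, which is the shape of the pattern the study describes at repository scale.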

The yes/no framing is wrong — there are three modes

Soto reframes the question. AI does make developers lazy, but which kind of lazy depends entirely on how you interact with it. He identifies three modes:

1. Blind Delegation ("the Self-Automator") — the default

Describe what you need → AI generates → check it runs → ship. Fastest interaction pattern. Also the one that produced sub-40% comprehension scores in the Anthropic study among heavy users. A Boston Consulting Group study found a parallel result: pure-delegation engineers developed neither domain skills nor meaningful AI skills. They got faster at prompting and slower at thinking.

Soto's lived examples from work:

- Solutions built before the problem was even defined.

- Documents that contain no actual decisions.

- Code that solves a problem the team doesn't have.

The tell is the silent assumption "it compiles, it works." That's the illusion of competence in plain English.

2. Manual (No AI) — the baseline

The manual group's 67% comprehension is the baseline. It's slow, metabolically expensive, and cognitively demanding. But it's the only mode that forces schema formation — the cognitive process of building an internal mental structure of how something works, not just what its output looks like. Senior intuition, architectural judgment, and debugging ability all come from schemas built by repeated, difficult cognitive effort.

Cognitive psychologists call this the "desirable difficulties" principle: the struggle isn't a bug in learning, it's the feature. When something is hard to process, the brain encodes it more durably. Remove the friction, and you remove the encoding too.

Manual mode isn't required for every task — but for any concept you actually need to own, it's non-negotiable.

3. AI for Learning (Socratic Mode) — the rare-but-best mode

Restrict the AI from writing the solution. Use it instead to explain, question, and guide while you do the writing. Soto cites a 19.6% improvement in learning outcomes vs. traditional methods, plus a LearnLM experiment showing an additional 5.5% improvement on novel problem-solving tasks.

The defining constraint: the AI does not write the code you ship. You ask AI to explain a concept, form your own hypothesis, write the code yourself, then ask AI to critique it. This is to Blind Delegation what "checking your approach with peers" is to "letting peers solve everything for you."

The 6 interaction patterns from the Anthropic study

Anthropic identified six concrete interaction patterns. Three hurt comprehension, three help.

Detrimental:

- AI Delegation — prompt, copy, ship. Zero comprehension transfer.

- Progressive Reliance — start manual, escalate to full AI when stuck. Rewires your problem-solving instinct toward giving up.

- Iterative Debugging — paste the error back to AI without reading it. Trains helplessness, not debugging skill.

Beneficial:

- Generation-Then-Comprehension — AI generates, then you explain every line before shipping. Forces schema formation after the fact.

- Hybrid Code-Explanation — alternate between writing code and explaining what you built, keeping understanding in-head.

- Conceptual Inquiry — ask AI to explain the concept, not write the solution. Build the mental model first.

Most engineers drift into the detrimental column without noticing. The beneficial patterns require deliberate choice every session.

7 laziness patterns Soto sees in real engineers

These are the field-observed expressions of the studies' findings.

- Auto-generating documentation nobody will read. You can tell AI wrote it because every step that would require actual thinking got skipped. Structure is there, analysis isn't. His content-effort analogy: a TikTok takes ~1 hr, a YouTube video or newsletter ~10 hrs, a book ~1 yr. Same author, but the book is the better source because of the effort poured in. Same with internal docs: if the author didn't invest the time to think, no reader will invest the time to engage. Output without ownership = noise.

- Building proposals without investigating the problem. AI can spit out 10 features in minutes (UI, knowledge base, automated reviews, one-stop app, etc.), but none of the foundational questions get answered: Who owns this data? Who keeps it current? What problem does this solve that existing tools don't? Building is cheaper with AI but not free, and maintaining still requires humans. At any time a team has exactly one bottleneck; find it, fix it, then move to the next. Scope without substance.

- Skipping review of AI-generated code. Once it appears to work, the instinct is to move on. But AI errs at the conceptual level, not just syntactic. A function can compile, pass tests, and still be fundamentally wrong on the edge case that matters, and the happy path won't surface it. Working ≠ correct. Correct ≠ maintainable.

- Trusting AI output without verification. AI's confidence is dangerous: it doesn't hesitate when it's wrong, and the output looks identical whether it's right or subtly broken. Because it speaks like a teammate, we trust it like one. "AI handled it, I can move on" is the illusion of competence. You can't push responsibility onto AI; the code is still yours.

- Auto-generating comparisons without understanding what's being compared. Soto contrasts two scenarios. In one, he used AI to sharpen a database-tech comparison whose tradeoffs he'd already reasoned through, which is fine. In the inverse, an engineer produces a comparison table they can't defend because the AI did the reasoning. A comparison you can't explain isn't yours; it belongs to the AI, and the AI doesn't show up to the design review.

- Generating code too fast to understand the system. When something breaks later, you're starting from zero in a system you thought you knew. Better path: dig deep into how the system works before generating; automate code generation only on the parts you already understand. Velocity debt: generating code fast isn't the same as moving fast.

- Using AI as an excuse for low-quality work. Soto's pushback is sharp: the "AI = bad quality" complaint pre-dates AI. The real cause is unreasonable deadlines. Don't say "if we use AI it'll be low quality"; say "if we move this fast, quality drops, or we need more people." Don't blame the tool for a decision a human made. Outsourced accountability.
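The "Working ≠ correct" point from the code-review pattern above is easy to reproduce. The following Python sketch is a hypothetical example, not one from Soto's article: a bill-splitting function that passes its happy-path test and still loses money on an edge case.

```python
def split_bill(total_cents: int, people: int) -> list[int]:
    """Naive version: integer-divide the bill evenly."""
    share = total_cents // people
    return [share] * people


# Happy-path test: passes, so the function appears to work.
assert split_bill(1000, 4) == [250, 250, 250, 250]

# Edge case the happy path never exercises: one cent goes missing.
shares = split_bill(1000, 3)
print(sum(shares))  # 999, not 1000


def split_bill_checked(total_cents: int, people: int) -> list[int]:
    """Distribute the remainder so the shares always add up to the total."""
    if people <= 0:
        raise ValueError("people must be positive")
    share, remainder = divmod(total_cents, people)
    return [share + 1 if i < remainder else share for i in range(people)]


print(split_bill_checked(1000, 3))  # [334, 333, 333]
```

Nothing in the first version fails to run or fails its test. The defect only shows up when a reviewer asks what should happen when the total does not divide evenly, which is the kind of question that gets skipped once the code appears to work.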

Why juniors get hit harder: the Expertise Reversal Effect

The same tool has opposite effects depending on experience level — one of the most under-discussed findings in the research.

- For novices: AI acts as a separate, external brain. It stores outputs the junior never built internally. It skips the cognitive struggle that forms schemas. Result: fast output, shallow understanding, the illusion of competence in its purest form. No schema → no debugging intuition → no path to senior.

- For experts: AI acts as an extension of their brain. It offloads boilerplate and syntax (extraneous cognitive load, the mechanical stuff that doesn't need judgment) while architecture, logic, and system understanding stay in-head. The thinking already happened before the prompt; AI just removes friction.

The structural consequence is already showing up: entry-level compensation pressure as AI-assisted juniors flood the market, and a worsening shortage of engineers who can genuinely architect complex systems. If AI prevents juniors from doing the hard cognitive work that produces seniors, the talent pipeline breaks at the source — faster juniors who never become seniors. Five years out, that's a leadership-supply problem for every org.

The Explainability Gap — the one metric to watch

Definition: the distance between the complexity of the AI-generated code and your own conceptual understanding of it. It's a direct proxy for whether you're shipping code you actually own.

Two-minute self-check: after any AI-assisted session, close the AI tool. Explain what you just built — out loud or in writing — in plain language. Not the output. The logic. Why does this function behave this way? What happens when this edge case hits? What assumption is baked into this design?

If you can't, the gap is open.

This pairs with an MIT Media Lab EEG study: brain activity scales inversely with AI autonomy. Higher AI autonomy → reduced neural signal in the prefrontal cortex (the reasoning/decision region). The "flow" feeling during AI-assisted coding may literally be your brain switching off rather than speeding up.

The Stack Overflow Test

Stack Overflow always had two modes: copy-paste-and-move-on, or read-understand-adapt-and-own. AI is the new Stack Overflow; the same fork applies. Auto-accepting everything = Blind Delegation. Iterating with AI until you can explain the result = Generation-Then-Comprehension.

The test before shipping: Can I explain this code back to myself without looking at the AI output? Yes → you own it. No → you're shipping someone else's work under your name.

7 habits to use AI daily without going lazy

- For new concepts, ask AI to explain, not generate. Socratic mode is the only mode that builds a usable mental model on first contact.

- After generating, rewrite the key logic in your own words before shipping. One paragraph, plain language. If you can't write it, you don't understand it.

- Never paste an error back to AI without reading it first. Read it, form a hypothesis, then ask AI to help test the hypothesis. Don't delegate diagnosis — even when AI is faster, you lose the edge-case understanding.

- Treat AI confidence as marketing, not signal. The model doesn't know when it's wrong; you have to be the checker.

- Write something without AI from time to time. Not for productivity — to keep schema-formation pathways active. Like using a calculator daily but still doing brain-training to keep mental math alive.

- Watch your Explainability Gap. Close the AI window and try to explain. Re-reading wasn't understanding when you were a student; it isn't now either.

- In a new codebase or concept, restrict AI to explanation mode. That's the schema-formation period. Don't skip the struggle.

Common questions (condensed)

- Does AI make developers lazy? Per Anthropic 2026, AI-assisted juniors scored 17 percentage points lower on comprehension (50% vs. 67%), a statistically significant gap (d = 0.738). Default interaction patterns trend toward detrimental outcomes; whether you go that way depends on how you use the tool.

- Is vibe coding bad for juniors? Yes — Expertise Reversal Effect. It's not a shortcut; it's a detour around the work that creates expertise.

- How do seniors use AI differently? They offload extraneous load (boilerplate, syntax, formatting) while keeping intrinsic load (architecture, logic, edge-case reasoning) in-head. AI removes friction from work they already understand.

- Can you still learn to code with AI? Yes, but only in Socratic mode (a cited ~19.6% improvement in learning outcomes over traditional methods). Generate-then-copy mode produces worse outcomes than no AI at all.

Bottom line

The illusion of competence isn't something that happens to you — it's a decision you make the moment the code runs and you choose to move on. The fix is annoyingly simple: decide to look at the code you're about to ship, every time. The developers who stay sharp aren't the ones using AI less. They're the ones who never stop owning what they build.

Key takeaways

- AI-assisted juniors scored 17 percentage points lower on comprehension (Anthropic 2026 RCT, Cohen's d ≈ 0.738).

- AI tooling adoption raised code complexity by 41% and security warnings by 30%, with no sustained velocity gain past month 3 (Carnegie Mellon, 807 repos).

- Three modes: Blind Delegation (detrimental), Manual (builds schemas), Socratic (best learning outcomes). Most default to the first.

- Expertise Reversal Effect: AI amplifies senior judgment but blocks juniors from forming the judgment in the first place.

- Explainability Gap is the one metric: if you can't explain it with the AI window closed, you don't own it.
