System Design One (#142)

29 LLM Evaluation Concepts Every Engineer Needs to Know

Anshuman Mishra & Neo Kim

Apr 27, 2026

29 LLM Evaluation Concepts Every Engineer Needs to Know

Source: System Design One (#142) · Authors: Anshuman Mishra & Neo Kim · Date: Apr 27, 2026 · Original post

You ship an LLM feature. It passes your manual tests. A day later, a user posts a screenshot of it hallucinating wildly. You tweak the prompt, re-run, and it works fine. Did you fix it, or did you just get lucky?

That is the central frustration of LLM engineering: you can no longer just run a test and call it done. It is not a debugging problem — it is a measurement problem. And measurement has a name: evaluation.

This piece walks through 29 vocabulary terms, methods, and mental models — written for software engineers who already know how to ship, but are new to the ways LLMs fail. By the end you should have a framework you could use to build an eval system from scratch.

Why your old testing instincts mislead you

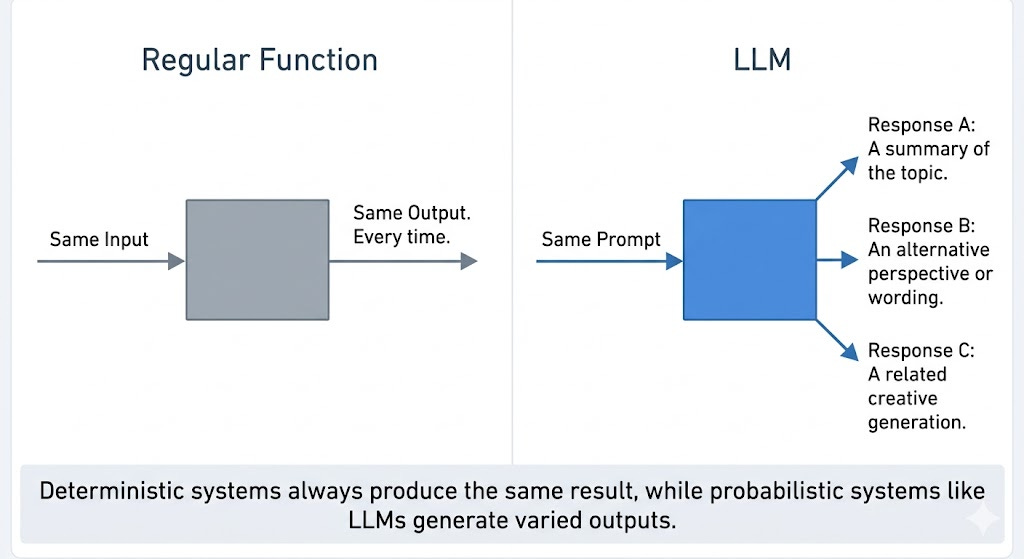

1. The non-determinism problem

Write a function, call it twice with the same input, get the same output twice. LLMs do not work that way: the same prompt can produce different responses on every run — sometimes slightly different, sometimes wildly different. This is not a bug; it is controlled by temperature (more on that later).

This breaks your mental model of testing. With code, a passing test is a verdict. With LLMs, a passing test is just a single data point — you are sampling from a probability distribution, and one sample tells you almost nothing.

2. The fuzzy-correctness problem

When a regex matches, it matches. Binary, objective, done. But what is the “correct” response to “summarize this support ticket empathetically”? The most concise one? The warmest? The one that captures all the facts? The one a human reviewer scores highest?

LLM quality is multi-dimensional and subjective. There is rarely one correct answer; there is a range of acceptable answers and a range of bad ones, and the line between them is a judgement call. You cannot measure what you have not defined — most teams skip the step of writing down what “good” means for their use case, and it haunts them later.

3. The silent-regression problem

You change a prompt, run it a few times, the outputs look better, you ship. But did quality actually improve, or did you get lucky with the samples you eyeballed?

In traditional software, CI catches regressions before they hit users. LLM engineering has no equivalent by default — so people fall back on gut feeling and spot checks, and find out about regressions when a customer complains. Without a systematic eval process, every prompt change is a blind bet: you might be fixing one failure mode while quietly introducing another.

These three problems are why LLM evaluation is its own discipline.

Primitives of eval

These six terms come up constantly. Learn them and the rest falls into place.

4. Criteria

Criteria are the dimensions of quality that matter for your specific use case.

A customer-support bot might care about: does it address the actual issue, is the tone empathetic, does it avoid escalating unnecessarily? A code-generation tool cares about completely different things: is the output syntactically valid, does it follow project conventions, does it actually solve the problem?

Same technology, entirely different criteria. This is a product decision, not a technical one — you cannot outsource it to your model or eval framework. Someone has to sit down and answer: what does a good output actually look like here? Get this wrong and everything downstream measures the wrong thing.

5. Quality dimensions

Standard industry vocabulary for talking about LLM output quality. Five that come up constantly:

- Relevance — Did the output address what was actually asked? A response can be accurate, well-written, and completely beside the point.

- Coherence — Does it hold together logically? No contradictions, no mid-sentence topic shifts.

- Factual accuracy — Is what it says actually true? Distinct from relevance: a response can be relevant and wrong.

- Helpfulness — Does it give the user what they need to move forward? The gap between a technically correct answer and a useful one.

- Safety — Does it avoid harmful, biased, or inappropriate content? Matters more in some domains than others, but it always matters.

6. Rubric

Once you have criteria, you operationalize them with a rubric. A vague criterion like “helpfulness” gets broken into specific, scorable questions: Does it directly answer? Does it avoid unnecessary hedging? Is it under 200 words? Can a non-technical user understand it?

Think of it like a code-review checklist. Instead of “Is this good code?” you ask: Are there tests? Is the function under 30 lines? Are variable names descriptive? The rubric is what makes evaluation reproducible — two reviewers (human or AI) should arrive at similar scores. Without one, every evaluation is just somebody’s opinion.

7. Test cases

A test case is an input/output pair forming one unit of evaluation. The input is a prompt, ideally one representative of real user traffic. The output is either a reference answer (what good looks like) or the live model output you’re about to score.

Like unit tests — except a failing test case does not mean the output was wrong; it means it scored below your threshold on your rubric. You need a lot of them. A handful gives you anecdotes; a few hundred gives you signal.

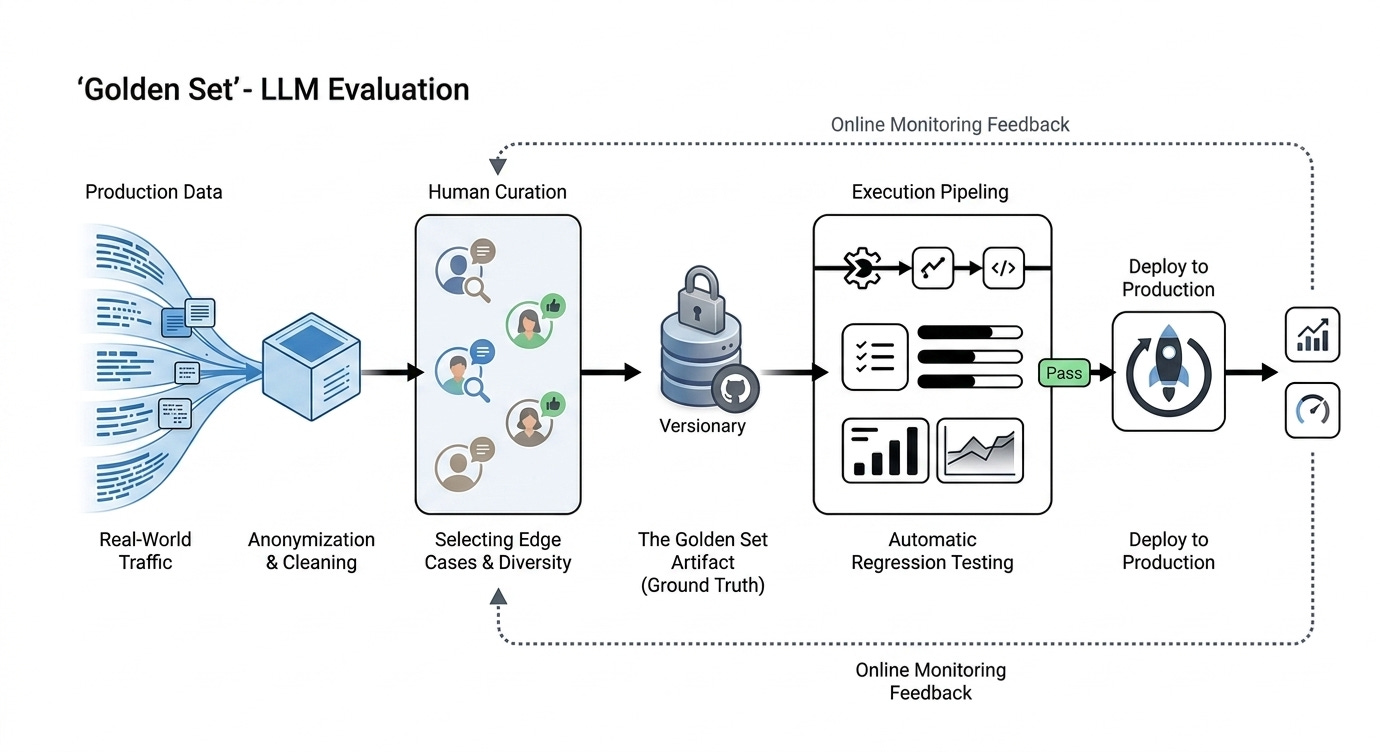

8. Golden set

Everything in your eval is measured against your golden set — a curated collection of high-quality test cases.

The instinct is to invent examples yourself. That is a start, but users phrase things differently than you expect, hit edge cases you did not imagine, and misuse features creatively. A golden set built from your imagination reflects your imagination, not your users’. Seed it with real production queries — anonymized, cleaned, representative. Treat it like a critical system artifact: version it, and update it whenever you discover new failure modes.

9. Pass/fail threshold

Eval scores are rarely binary; rubrics produce 1–5, 0–10, or percentages. The threshold is what converts a score into a decision. If your scale is 1–5 and threshold is 3, anything below 3 fails.

Setting the threshold is a product call. It depends on how much imperfection your users tolerate, how severe failures are in your domain, and the cost of false positives. Too low: you ship garbage. Too high: you ship nothing.

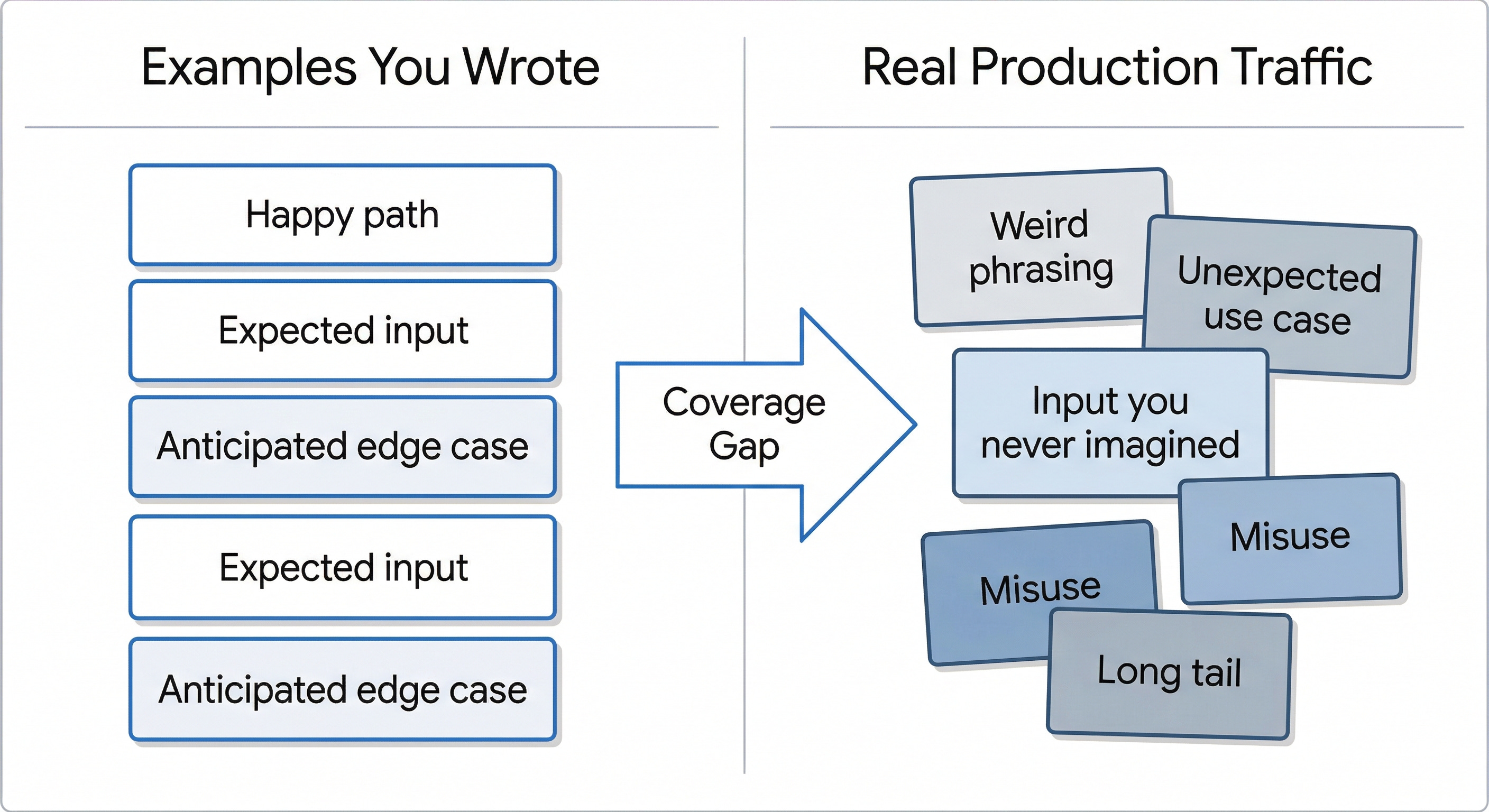

10. Eval coverage

How well does your golden set reflect real user inputs? Most teams have low coverage and don’t realize it: they built the set from happy-path examples and a few obvious edge cases, while production traffic is full of weird inputs and unusual phrasings.

Low coverage means an optimistic eval suite — you’ll pass your tests but fail your users. The fix isn’t writing more examples yourself; it’s sampling from production regularly, reviewing failures, and adding the inputs that exposed weaknesses to your golden set. Coverage is something you build over time.

11. Temperature, top-p, and reproducibility

Temperature controls how random outputs are. Near 0 makes the model nearly deterministic — same input, same output. Higher values make it more creative, sampling from a wider range of probable tokens.

Top-p (nucleus sampling) is a related setting: instead of a randomness dial, it sets a probability cutoff. top-p = 0.9 means the model only considers the top 90% most likely next tokens.

Both directly affect eval results. Run evals at temperature = 1.0 and the same prompt might pass today and fail tomorrow — not because the model changed, but because randomness swung against you. Standard practice: set temperature to 0 during eval runs to lock in determinism. If you need creative variance in production, evaluate at production temperature too — but accept the noisier results.

12. Statistical rigor

Even at temperature = 0, a single eval run isn’t enough. Your golden set is a sample of your input space and has its own variance. One unlucky set of examples can make a good prompt look bad; a lucky one can make a bad prompt look good.

So run many evaluations across different samples. Report the mean and the variance, not just the score. When comparing two prompt versions, ask whether the difference is real or just noise. If your score moves from 4.1 to 4.3, that might be a real improvement — or random fluctuation. Without variance data across many runs you cannot tell. Most teams run once, report the number, and ship; that is how confident regressions get deployed.

How do you score outputs?

You have criteria, a rubric, and test cases. Now: who does the scoring? There are several options, each with different cost/speed/accuracy trade-offs.

13. Human evaluation

The gold standard. A human applies your rubric and gives a score. Slow, expensive, sometimes inconsistent — but the closest thing to ground truth. You cannot use humans for every eval run, but you should not remove them either; everything else (metrics, LLM judges, tests) is just an approximation of human judgement.

Use humans strategically: to build and validate your golden set, to periodically check the accuracy of your automated evals, and to debug when something breaks and you don’t know why.

14. Heuristic / code-based evaluation

The fastest and cheapest type. Simple code checks on structural properties: Is the response valid JSON? Within the character limit? All required fields present? Avoiding banned phrases? Matches a regex?

Heuristics catch structural problems but cannot tell whether a response is helpful, accurate, or well-written. Treat them as your first line of defense — they catch basics before you spend money on expensive evaluations. Necessary, not sufficient.

15. Semantic similarity evaluation

When you have a reference answer, you can measure how close the model’s output is in meaning — not exact wording. Each text gets converted to an embedding (a vector of numbers representing meaning). Texts with similar meanings have vectors that are close together; cosine similarity measures the angle between them — 1.0 means identical meaning, near 0 means unrelated.

Why it matters: string matching is too strict. “API returns a 404 error” and “Endpoint responds with a not found status” mean the same thing in different words. Exact match calls the second one wrong; semantic similarity calls them equivalent.

Limitation: it only measures closeness to a reference. It can’t catch a fluent-but-wrong answer if the wrong answer happens to be semantically near the right one. Use it as a fast, scalable layer — but not alone.

16. Task-specific metrics (BLEU, ROUGE, execution-based)

For some tasks there are purpose-built metrics:

- BLEU (Bilingual Evaluation Understudy) — originally for machine translation. Measures n-gram overlap between generated and reference text. Good when exact phrasing matters.

- ROUGE (Recall-Oriented Understudy for Gisting Evaluation) — designed for summarization. Measures recall: how much of the reference appears in the output. Useful when coverage of key points matters.

- Execution-based — for code generation, the only metric that really matters: does it run, and does it produce the correct output? Run the generated code against test cases.

All three trade off the same way: they measure surface similarity to a reference, not actual quality. Use them where they fit.

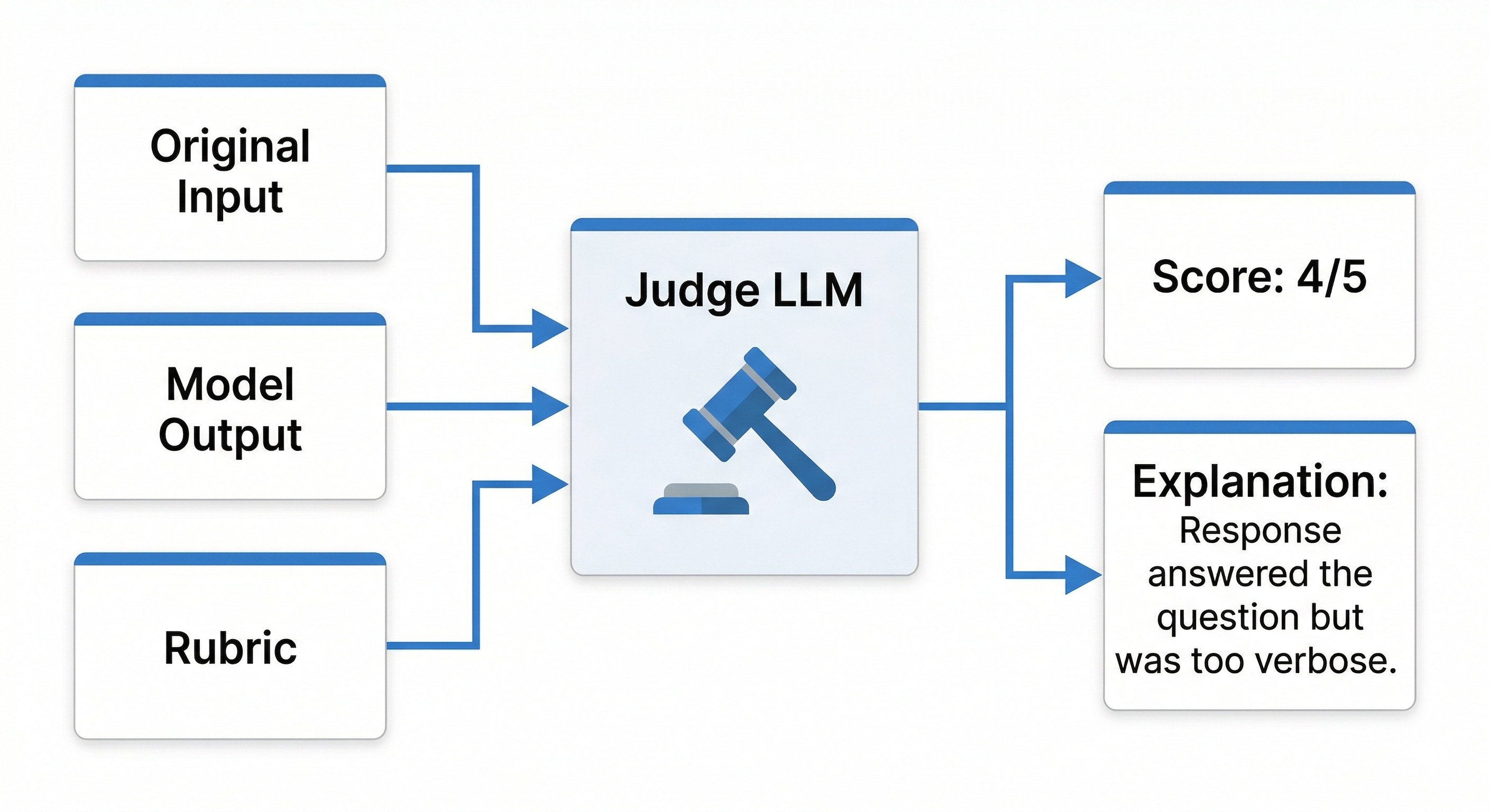

17. LLM as judge

This is what makes evaluation scalable. You give a more capable model three things — the original input, the output to evaluate, and your rubric — and it returns a score plus an explanation.

Think of it like automated load testing for quality: nobody clicks through 10,000 user flows manually, you automate it. LLM-as-judge does the same for rubric scoring — thousands of outputs without thousands of human hours. GPT-5 and Claude Opus are commonly used; they’re capable enough to apply nuanced rubrics, called via API with no custom training.

The catch: judges have their own biases and blind spots. They are an approximation of human judgement, not a replacement.

18. Pointwise vs. pairwise

Two flavors of LLM-as-judge:

- Pointwise — “Score this output 1–5.” Simple, fast, one call per output.

- Pairwise — “Here are two outputs, which is better?” Usually more reliable because comparing is easier than scoring; especially good for asking “is the new prompt better than the old one?”

Pairwise costs roughly 2× because you’re evaluating two outputs. At small scale this doesn’t matter; at production scale it adds up. Industry-standard tiered approach:

- Online (production monitoring) — fast, cheap heuristics or smaller fine-tuned judge models that can run continuously.

- Offline (pre-ship testing) — your best judge model, pointwise and pairwise, only run before deployments.

Treat the powerful judge as a deployment gate, not something hit on every request.

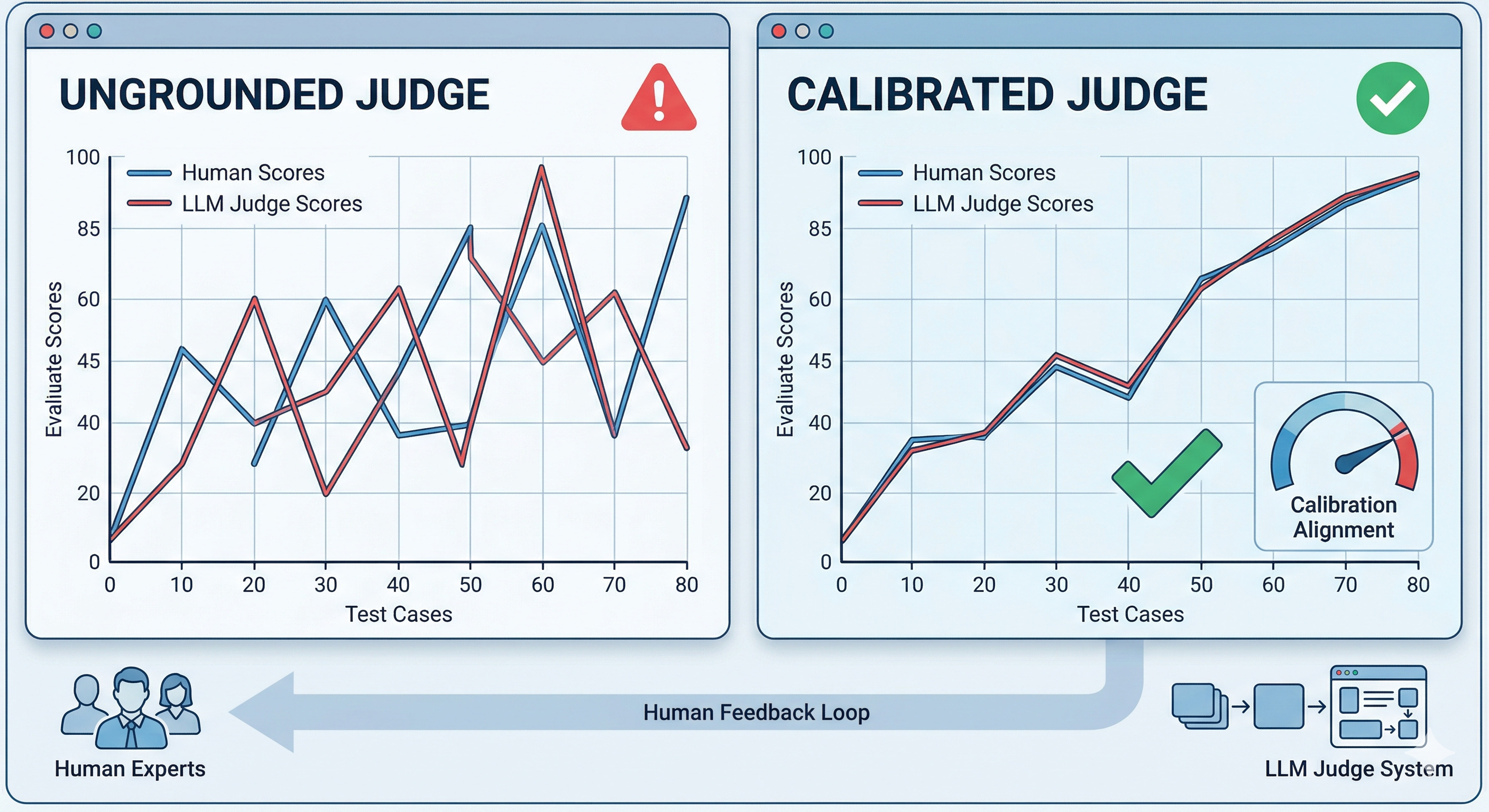

19. Judge calibration

Before you trust an LLM judge, measure how well it matches human judgement.

Take a sample of outputs, have both humans and the judge score them, and check agreement. High agreement means the judge is a good proxy; low agreement means it may be measuring the wrong thing. A poorly calibrated judge gives confidence in incorrect results — calibrate before using, and recalibrate periodically (especially after switching models or prompts).

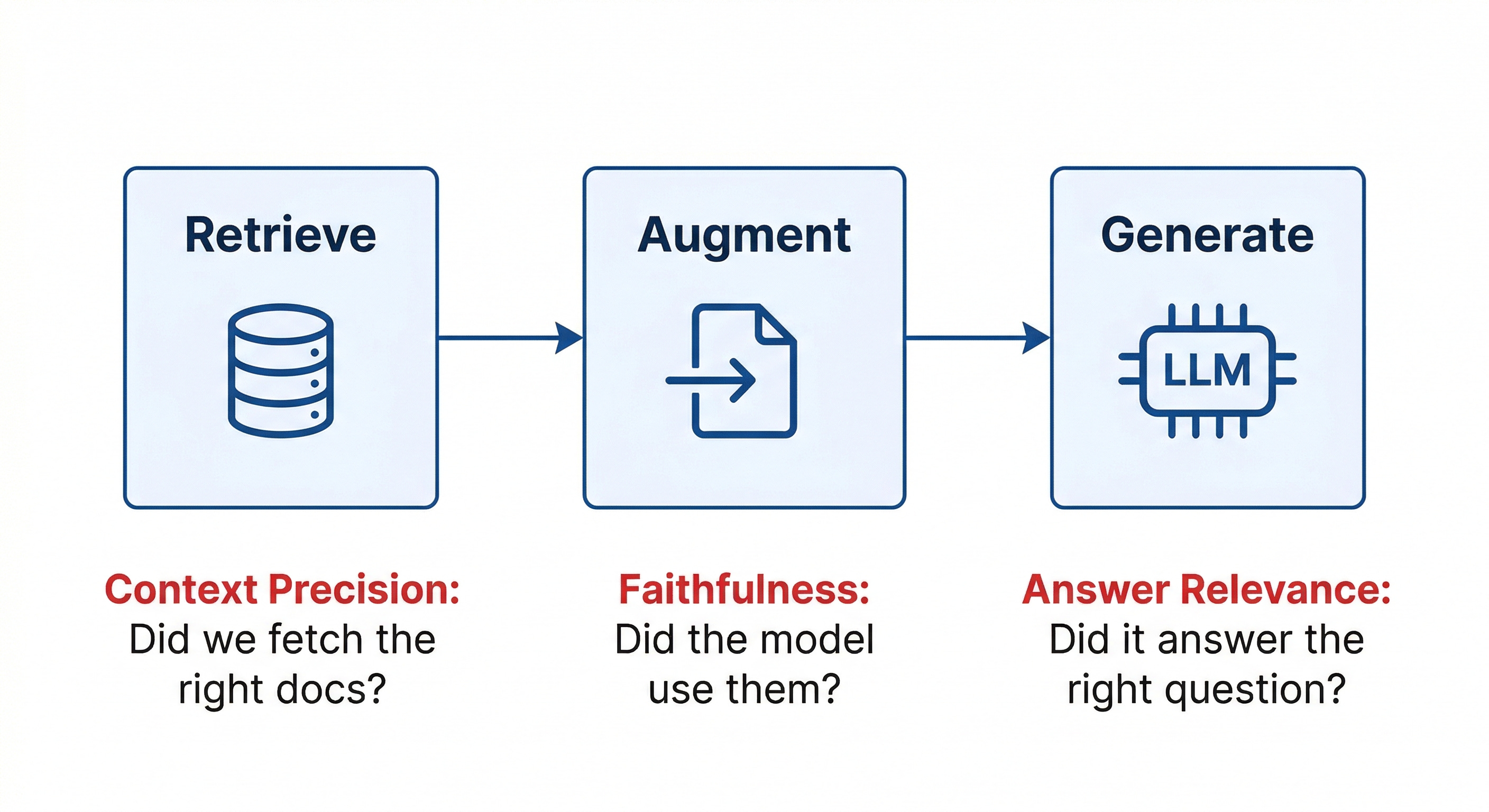

RAG system evaluation

Most LLM applications today aren’t pure “prompt in, response out.” They use RAG (Retrieval-Augmented Generation): fetch relevant documents, inject them into context, then generate a grounded response. RAG reduces hallucinations by grounding the model in real data — but it introduces new failure modes that standard LLM eval misses.

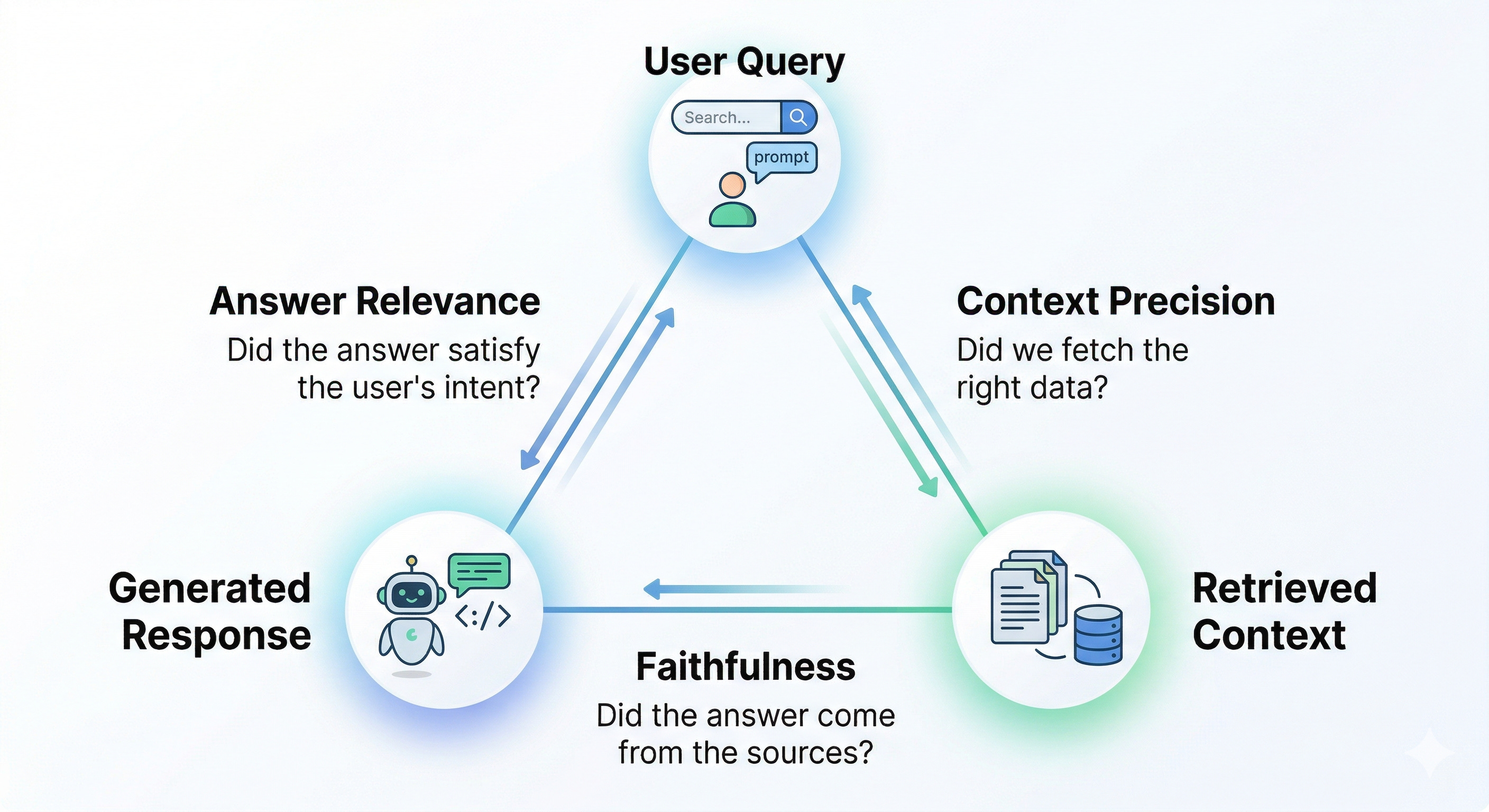

20. The RAG triad

Three dimensions to score a RAG system:

- Faithfulness — Did the answer actually come from the retrieved context? You can retrieve perfect documents and the model can still ignore them. Faithfulness checks whether the response is grounded in the provided sources. A failure here is a confident answer that isn’t actually supported — a hallucination with extra steps.

- Answer relevance — Did the response actually answer the user’s question? A response can be faithful to the context yet miss the point, using the right documents to answer the wrong question. Faithfulness checks if the model stayed in context; answer relevance checks if it matched the user’s intent.

- Context precision — Did retrieval fetch the right documents? Even a perfect generator fails if context is poor. If retrieval brings weak or loosely related docs, the model will guess or refuse. Context precision evaluates the retrieval stage by checking how many retrieved documents were actually relevant.

Think of RAG as three stages — retrieval, augmentation, generation — each able to fail on its own. The triad is your observability layer to localize the failure.

21. RAG-specific failure patterns

- Retrieval returns irrelevant chunks (context-precision failure). Wrong or loosely related documents come back. The model either hallucinates to fill the gap or says it doesn’t know. Fix is in the retrieval layer: embedding quality, chunking strategy, re-ranking.

- Retrieval is correct, but the model ignores it (faithfulness failure). The right documents come back; the model doesn’t use them. Usually a prompting issue — the prompt isn’t forcing the model to rely on the provided context. Fix: strengthen the prompt to keep the model grounded.

- The answer is grounded but doesn’t help the user (answer-relevance failure). Technically correct, supported by context, but doesn’t solve the user’s problem. Usually means your knowledge base is missing the right information. Fix the data, not the prompt.

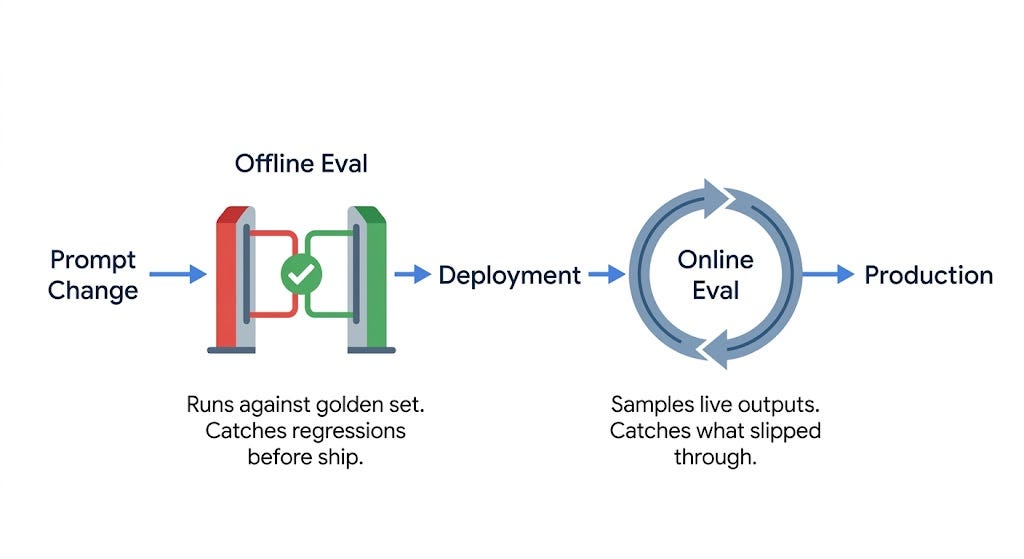

Offline vs. online: building a system

Scoring one output is one problem; building a system that reliably catches failures before and after deployment is another.

22. Offline evaluation

Happens before you ship. You make a change — new prompt, model version, retrieval strategy — and test it against your golden dataset before users see it, comparing scores to the current system.

Think of it as CI for LLMs: a change shouldn’t go live unless it passes evals. This is also where you use your best, most accurate (and expensive) judge model — GPT-5, Claude Opus — because cost matters less when you’re only evaluating a finite golden set, not every user request.

23. Online evaluation

Happens in production. You sample live outputs continuously and score them, catching the issues offline evals miss: edge cases, unusual inputs, failures that only appear at scale. Think of it as monitoring — it surfaces problems before users report them.

The constraint is cost: you can’t run your most expensive judge on every request. Online eval relies on heuristics and smaller, cheaper judge models that can run continuously, trading some accuracy for scale.

Offline and online complement each other: offline catches known issues before deployment, online catches unexpected issues in production.

24. Prompt versioning and regression testing

Your prompt is code; treat it that way. Use version control, keep history, compare diffs. When something breaks you should know exactly what changed and when.

Regression testing means running your eval suite against each prompt version. If the old prompt scored 4.2 and the new one scores 3.8, you’ve caught a regression before it reached users. This sounds simple, but most teams don’t do it — they lack a golden set, a clear rubric, or the infrastructure. It’s the dividing line between teams that iterate confidently and teams that ship and pray.

25. Benchmark-based evaluation

Standard tests for comparing models:

- MMLU (Massive Multitask Language Understanding) — knowledge across math, science, law, medicine, etc. A general-knowledge exam.

- HellaSwag — common-sense reasoning. The model gets the start of a scenario and predicts what happens next. Earlier models struggled badly with this.

- HumanEval — code generation. Given a function signature, write the implementation. Measured by pass@k: how often the model gets it right within k attempts.

Useful leaderboards: the Hugging Face Open LLM Leaderboard for open-weight models, and Chatbot Arena (LMSYS) for human-preference rankings.

26. The benchmark vs. real-world tradeoff

Benchmarks don’t tell you whether a model will work for your specific use case. A model with 90% on MMLU might still struggle with your domain language; one that crushes HumanEval might generate code that doesn’t fit your standards. Benchmarks measure general capability; your application probably needs specific capability. Use benchmarks to narrow down the candidate list, then use your own golden set to make the final decision.

27. Dataset contamination / data leakage

The problem most engineers overlook: dataset contamination happens when evaluation data overlaps with the model’s training data. The model has already seen the answers, so a high benchmark score reflects memorization, not capability.

This happens because both training data and benchmarks come from the internet, and the overlap is often unknown. Over time, popular benchmarks become less reliable — which is why new ones keep being created. Don’t rely on public examples for your evals; build your own datasets from real user queries.

Failure modes (what not to do)

28. Eval anti-patterns

You can have the right tools and still build a bad eval system. The common mistakes:

- Vibe-based evaluation. “I tried it a few times and it looked good.” The most common mistake. Informal spot-checking creates false confidence — it can’t catch edge cases, track quality over time, or scale beyond one person eyeballing a few outputs. Vibes are a starting point, not an eval system.

- The single-sample trap. You run your eval suite once and report the number. But LLMs are non-deterministic — a bad prompt can look good, a good prompt can look bad. Run many samples, aggregate, and report variance, not just averages.

- Goodhart’s Law in disguise. “When a measure becomes a target, it stops being a good measure.” Optimize a metric too aggressively and it stops measuring what you care about — reward confidence and you’ll get confident hallucinations; reward length and you’ll get long, low-quality answers. Metrics are a proxy for quality, not the goal.

- Eval/production mismatch. Your golden set reflects what you expect; real users behave differently — vague, unpredictable, using the system in ways you didn’t anticipate. A high pass rate on unrealistic data doesn’t mean your system works.

- Ignoring tail failures. A 92% pass rate sounds great. But what about the 8%? LLM failures are often catastrophic, not graceful — one harmful or wrong answer can matter more than hundreds of right ones. Always review failure cases, not just averages. The worst outputs tell you the most.

Decision framework

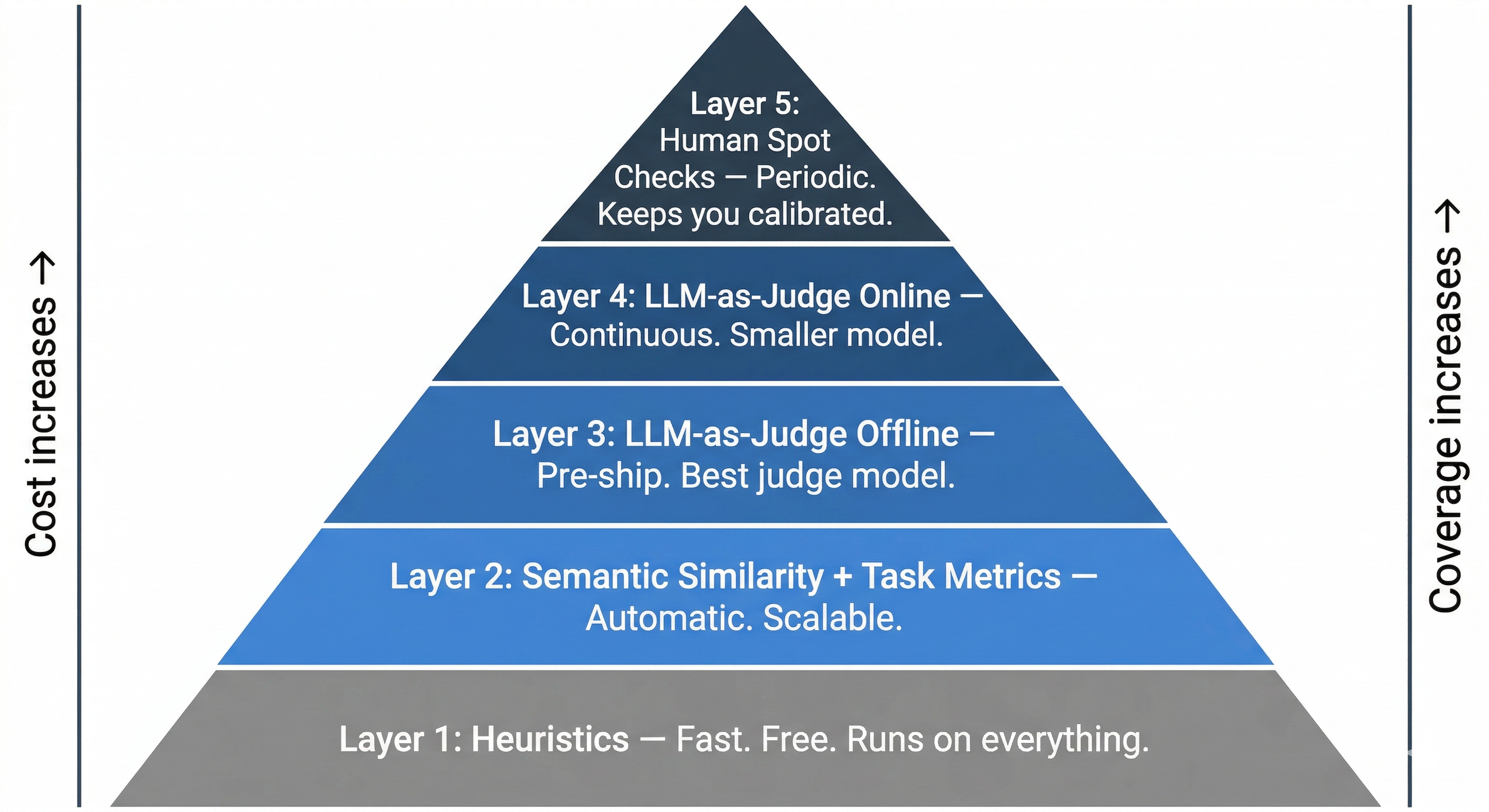

There’s no single tool that solves LLM evaluation. What works is a layered system, where each layer catches problems the previous one missed.

29. The eval stack

- Layer 1 — Heuristics. Fast, deterministic, cheap. Run on every output. Catches structural issues: bad format, missing fields, banned content.

- Layer 2 — Semantic similarity & task-specific metrics. Run automatically against reference answers to catch meaning-level failures without LLM-judge cost.

- Layer 3 — LLM-as-judge (offline). Runs before deployment on the golden set with your best judge model. Catches quality regressions before users see them.

- Layer 4 — LLM-as-judge (online, smaller model). Runs in production on a sample of live outputs with a cheaper judge. Catches scale failures the offline eval missed.

- Layer 5 — Human spot checks. Periodic. Keep you calibrated, verify automated evals still match human judgement, and feed new failure cases back into the golden set.

Each layer has a different cost profile and catches a different failure type. You eventually want all five — but you don’t need them all on day one.

A 3-step MVP

- Build a golden set of ~50 examples. Don’t write all of them yourself. Use real user queries if you have any; otherwise, ~30 representative examples plus ~20 edge cases (normal cases, adversarial inputs, weird phrasings users might try). This becomes the foundation of your eval system.

- Add one deterministic heuristic. Pick the single most important structural requirement — length, format, a required field, a banned phrase — and write a code check for it. Quick to build, catches more than people expect.

- Add one LLM-judge prompt. Turn your rubric into a judge prompt, use a strong model to score outputs on one important quality dimension, and run it on your golden set. Read the scores and the explanations carefully. You will find something surprising — that’s the point.

Everything else builds on top of this MVP: pairwise comparisons, online monitoring, RAG scoring, regression testing.

Closing thoughts

Evaluation isn’t something you do at the end — it’s what makes iteration possible. Without it you’re not doing LLM engineering; you’re guessing and shipping changes, then learning from users that they didn’t work.

Traditional software engineering learned this lesson the hard way: CI, automated tests, production monitoring aren’t optional — they’re what make fast, reliable development possible. LLM systems need the same discipline. The difference is that the outputs are non-deterministic and hard to measure. Now you have the vocabulary to deal with that. Start simple, build your eval stack step by step, and over time you’ll know whether a change improved quality before users ever see it.

Footnote — variance: how much your results change when you repeat the same evaluation. It tells you how spread out or inconsistent your scores are.

Footnote — quick recap: Criteria = what you care about (accuracy, clarity, helpfulness). Rubric = how you score those criteria consistently (e.g., 1–5 with definitions). Test cases = specific inputs/examples used to evaluate the model.

Author

Anshuman Mishra & Neo Kim

Continued reading

Keep your momentum

MKT1 Newsletter

100 B2B Startups, 100+ Stats, and 14 Graphs on Web, Social, and Content

This is Part 2 of MKT1's three-part State of B2B Marketing Report. Where Part 1 looked at teams and leadership , Part 2 turns to what marketing teams are actually doing — what their websites look like, how they use social, and what "content fuel" they're producing. Emily Kramer u

Apr 28 · 10m

Lenny's Newsletter (Lenny's Podcast)

Why Half of Product Managers Are in Trouble — Nikhyl Singhal on the AI Reinvention Threshold

Nikhyl Singhal is a serial founder and a former senior product executive at Meta, Google, and Credit Karma . Today he runs The Skip ( skip.show (https://skip.show)), a community for senior product leaders, plus offshoots like Skip Community , Skip Coach , and Skip.help . Lenny de

Apr 27 · 7m

The AI Corner

The AI Agent That Thinks Like Jensen Huang, Elon Musk, and Dario Amodei

Dominguez opens with a claim that is easy to skim past but worth stopping on: the difference between elite founders and everyone else is not raw IQ or speed — it is that each of them has internalized a repeatable mental procedure they run on every important decision. The procedur

Apr 27 · 6m