Engineering Leadership

Code Review is the New Bottleneck For Engineering Teams

Gregor Ojstersek

Apr 13, 2026

Source: Engineering Leadership · Author: Gregor Ojstersek · Date: 2026-04-13

The core idea

AI lets engineers write code faster than ever, so the volume of pull requests (PRs — proposed code changes waiting to be merged into the main branch) coming into a team has exploded. But the review side hasn't sped up at all. Reviewing is still slow, manual, and mentally taxing. The result: teams now sit on growing piles of unreviewed PRs, and the bottleneck has shifted from "how fast can we write code" to "how fast can we review it." If we don't evolve the review process, we lose most of the productivity AI promised.

Why fast PR reviews have always mattered

Gregor has long tracked PR Cycle Time — the elapsed time from opening a PR to merging it — as a signal of a high-performing team. It's not a perfect proxy, but in his experience the correlation has been strong.
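As a rough illustration of the metric, here is a minimal sketch of PR Cycle Time computed from open and merge timestamps. The function names and the sample data are hypothetical and not from the article. The median is used only because it is less sensitive to one very slow PR; the article does not say how Gregor aggregates the metric.

```python
from datetime import datetime, timedelta
from statistics import median


def pr_cycle_time(opened_at: datetime, merged_at: datetime) -> timedelta:
    """Elapsed time from opening a PR to merging it."""
    if merged_at < opened_at:
        raise ValueError("merged_at must not precede opened_at")
    return merged_at - opened_at


def median_cycle_time_hours(prs: list[tuple[datetime, datetime]]) -> float:
    """Median cycle time in hours over (opened_at, merged_at) pairs."""
    return median(pr_cycle_time(o, m).total_seconds() / 3600 for o, m in prs)


# Hypothetical sample data, not taken from the article.
sample_prs = [
    (datetime(2026, 4, 1, 9, 0), datetime(2026, 4, 1, 15, 0)),   # 6 hours
    (datetime(2026, 4, 2, 10, 0), datetime(2026, 4, 4, 10, 0)),  # 48 hours
    (datetime(2026, 4, 3, 8, 0), datetime(2026, 4, 3, 20, 0)),   # 12 hours
]
print(median_cycle_time_hours(sample_prs))  # 12.0
```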

The reasoning is about how human attention works. Every open PR is a chunk of context the author has to keep loaded in their head: why a particular function was structured a certain way, which edge cases they were worried about, what trade-off they made and why.

Concrete walk-through:

- An engineer opens PR #1 and waits for review.

- While waiting, they start PR #2. Now they're holding two stacks of context.

- PR #1 still isn't reviewed, so they pick up PR #3. Three stacks.

- By now the why behind PR #1 is fading. When the reviewer finally asks "why did you do this?", the engineer half-remembers. Edge cases that were once obvious get forgotten. Bugs slip into production.

The slower reviews get, the more this context-switching tax compounds. Fast reviews aren't just nice — they protect quality.

Why engineers don't want to review

Most engineers (and engineering leaders) prefer building over reviewing. It's worth understanding why, because it explains why review queues pile up.

Building feels good because:

- You're solving a problem and the code visibly changes behavior — a clear "I did a thing" moment.

- You make visible progress, which triggers dopamine.

- Closing a task and seeing your change live in production is motivating.

Reviewing feels draining because:

- You have to load someone else's full context into your head — what they were trying to do, why they chose this approach, what the surrounding code does. That's heavy mental work, especially when you're reviewing many different PRs in a day.

- You may need to ask questions or hunt down extra context from other people or docs.

- You're on the hook — as a reviewer you carry partial responsibility if something breaks.

- You're expected to find issues and give feedback, which can be socially uncomfortable, especially if the author disagrees.

Important caveat: the more senior you become (Staff+ engineer, Architect, EM and above), the more reviewing — of code, docs, specs, decisions — is the job. You don't get to opt out.

Why teams have always struggled with PR Cycle Time

Even before AI, mandatory PR reviews plus QA testing meant merges took time. Things got even slower when:

- The change was bigger than usual.

- The work touched a complex or legacy area of the codebase.

- The requirements were ambiguous.

On top of that, every engineer has their own roadmap, priorities, and deep work. Reviewing other people's PRs is almost never their primary focus. And most companies wisely don't treat "PRs reviewed" as a personal KPI — making it a metric would distort behavior — but the side effect is that reviews compete with everything else for attention and usually lose.

Why this just got dramatically worse: AI is generating PRs faster than humans can read them

Two main sources are flooding the queue:

1. Agentic AI PRs. Tools such as Devin and OpenClaw can be given their own GitHub account. You hand them a task and they open a PR directly. The catch: you weren't in the loop while the code was written, so as a reviewer you arrive with no context at all and have to read every line cold. That takes serious mental energy.

2. AI-assisted engineering. With tools like Cursor and Claude Code, a human engineer drives while AI helps generate the code. Gregor considers this the better and more common pattern today: the human keeps full context of the change and the PR is opened on their behalf, but the rate at which PRs get produced still goes way up.

Both pathways crank up PR throughput. Sounds great — until you remember nobody sped up the reviewing side.

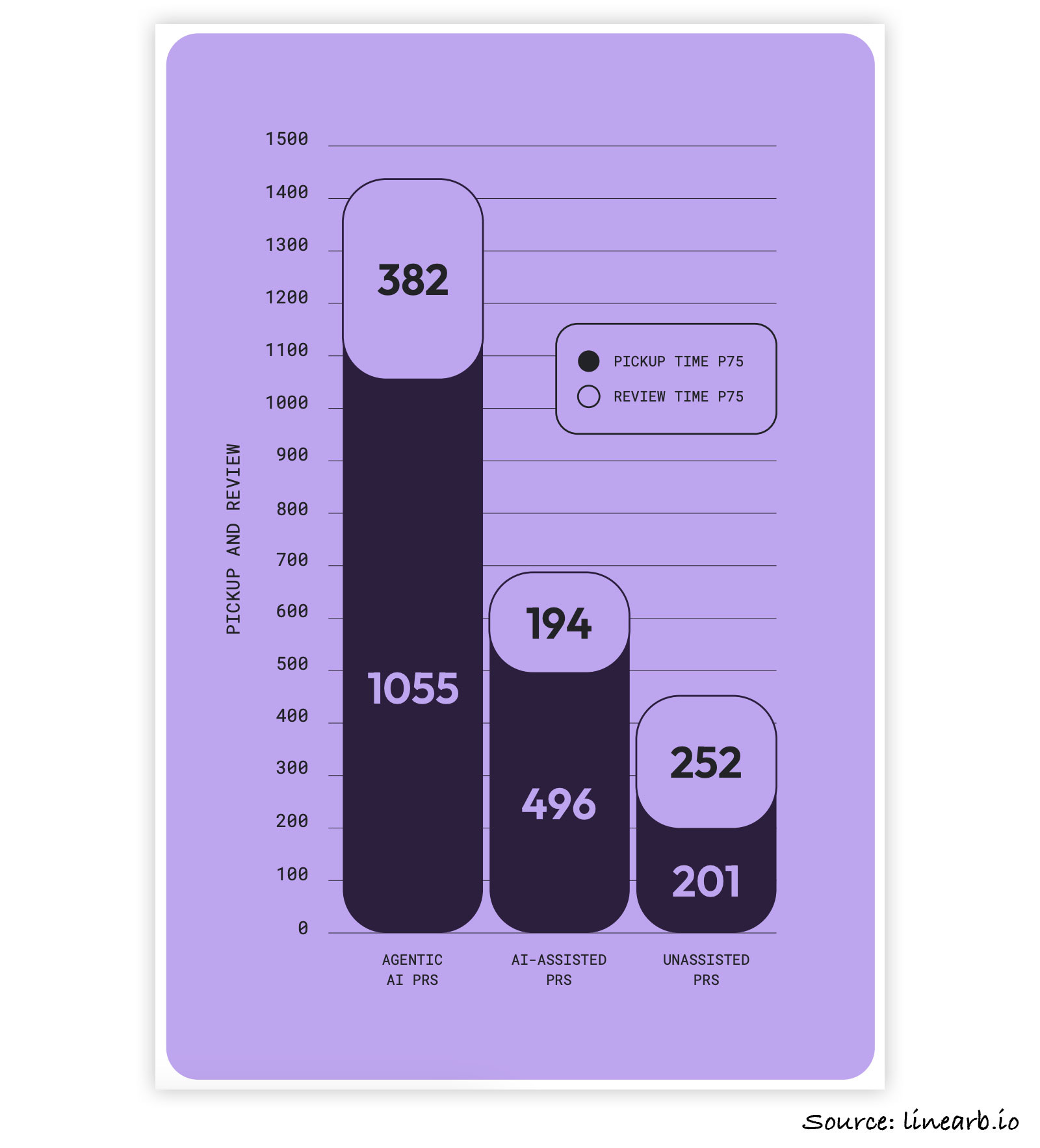

The 2026 LinearB Software Engineering Benchmarks Report (data from teams across 42 countries) puts numbers on it:

- Agentic AI PRs have a PR Pickup Time 5.3× longer than unassisted PRs.

- AI-assisted PRs have a PR Pickup Time 2.47× longer than unassisted PRs.

(PR Pickup Time = how long a PR sits open before a reviewer first looks at it.)
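To make the multipliers concrete, the sketch below applies them to a baseline pickup time. Only the two multipliers come from the report as quoted above. The 4-hour baseline, the function name, and the reading of "N× longer" as "N times the baseline" are assumptions for illustration.

```python
# Multipliers as quoted above from the LinearB report; "unassisted" is the baseline.
PICKUP_MULTIPLIER = {"unassisted": 1.0, "ai_assisted": 2.47, "agentic": 5.3}


def estimated_pickup_hours(baseline_hours: float, pr_kind: str) -> float:
    """Scale a baseline pickup time by the multiplier for the given PR kind."""
    return baseline_hours * PICKUP_MULTIPLIER[pr_kind]


baseline = 4.0  # hypothetical: unassisted PRs wait 4 hours for a first look
for kind in PICKUP_MULTIPLIER:
    print(f"{kind}: {estimated_pickup_hours(baseline, kind):.1f} h")
```

Under that assumed baseline, an AI-assisted PR would wait roughly 10 hours and an agentic PR roughly 21 hours before anyone looks at it.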

The headline finding: engineers actively dread reviewing AI-generated code and procrastinate on it. Combine "more PRs than ever" with "reviewers stalling on the AI ones," and you have a textbook bottleneck.
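The bottleneck dynamic can be sketched as a simple queue: once PRs open faster than the team can review them, the backlog grows every day. The daily numbers below are hypothetical and chosen only to show the shape of the problem.

```python
def simulate_review_backlog(opened_per_day: int, reviewed_per_day: int, days: int) -> list[int]:
    """Return the number of unreviewed PRs at the end of each day."""
    backlog = 0
    history = []
    for _ in range(days):
        backlog += opened_per_day
        # The team cannot review more PRs than are waiting.
        backlog -= min(backlog, reviewed_per_day)
        history.append(backlog)
    return history


# Hypothetical team: review capacity stays at 10 PRs/day in both scenarios.
before_ai = simulate_review_backlog(opened_per_day=8, reviewed_per_day=10, days=5)
after_ai = simulate_review_backlog(opened_per_day=14, reviewed_per_day=10, days=5)
print(before_ai)  # [0, 0, 0, 0, 0]
print(after_ai)   # [4, 8, 12, 16, 20]
```

The model ignores PR size and reviewer procrastination, both of which would likely make the real backlog grow faster.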

A nuance: codebase size and complexity matter

AI's productivity boost isn't uniform. The pattern Gregor consistently hears:

- Greenfield projects (brand new codebases) get the biggest boost. You can structure things in a way LLMs handle well, so AI-generated code is much more accurate.

- Old, complex, or architecturally tangled codebases get the smallest boost. The more architectural change required, the less helpful AI is.

This is partly why opinionated frameworks like Ruby on Rails and Angular are gaining popularity again — they enforce strong conventions and clear best practices, which gives LLMs a tight, well-defined target. Less ambiguity for the AI = more accurate generated code.

So if you're on a long-lived complex system, expect a smaller PR surge — but the PRs you do get may be even harder to review.

Concrete tactics to unblock reviews

The mental anchor: "How can I make my reviewer's job easier?" or "What kind of PR would I want to review?" Then do exactly that.

1. Add solid test cases for your change. Tests serve double duty: they raise your own confidence that the code does what it should, and they give the reviewer a quick way to trust the change. Gregor recommends Addy Osmani's Agent Skills repo, specifically the test-driven-development skill, to help generate good tests from your diff.

2. Run AI review on your code before opening the PR. Every back-and-forth comment cycle on a PR costs both author and reviewer time and mental energy. A pre-flight AI review catches the obvious stuff so the human reviewer never has to raise it. Use any AI code-review tool, or a custom prompt, or Addy Osmani's code-review-and-quality skill.

3. Pre-comment on the tricky parts when you open the PR. If you can predict where reviewers will be confused, drop your own inline comment explaining why the code looks the way it does, before assigning reviewers. This kills a whole round of "wait, why did you do this?" → "because X" → "ah ok" exchanges.

4. Wire an AI code reviewer into CI to auto-review every PR on open. This is the change Gregor calls a "no-brainer." When a human reviewer arrives, the AI has already done a first pass — so they're not starting from a blank page. He expects this single change to drastically improve PR Pickup Time, because the painful "cold start" of opening an unreviewed PR is gone.
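Tactic 4 could look roughly like the script below, which a CI job might run when a PR opens. It is a sketch under assumptions, not a description of any specific tool: the `reviewer` callable stands in for whichever AI review service a team uses, and the instruction text, function names, and truncation limit are all hypothetical. Fetching the diff and posting the comment are left to the CI system.

```python
from typing import Callable

# Hypothetical instruction text; tune it to your team's standards.
REVIEW_INSTRUCTIONS = (
    "Review this diff. List likely bugs, missing tests, and unclear code. "
    "Be specific and reference file names."
)


def build_review_prompt(diff: str, max_chars: int = 20_000) -> str:
    """Combine the instructions with the diff, truncating very large diffs."""
    truncated = diff[:max_chars]
    note = "\n[diff truncated]" if len(diff) > max_chars else ""
    return f"{REVIEW_INSTRUCTIONS}\n\n{truncated}{note}"


def first_pass_review(diff: str, reviewer: Callable[[str], str]) -> str:
    """Run the injected reviewer and format its output as a PR comment body."""
    if not diff.strip():
        return "AI first pass: empty diff, nothing to review."
    findings = reviewer(build_review_prompt(diff))
    return f"AI first pass:\n\n{findings}"


def fake_reviewer(prompt: str) -> str:
    """Stand-in reviewer so the sketch runs without any external service."""
    if "TODO" in prompt:
        return "Found a TODO left in the diff."
    return "No obvious issues found."


print(first_pass_review("+ x = 1  # TODO remove", fake_reviewer))
```

Passing the reviewer in as a plain callable keeps the sketch independent of any vendor and makes it easy to test with a stub like `fake_reviewer`.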

Balancing AI reviewers with human reviewers

As human reviews keep slipping, more teams are leaning on AI reviewers. Two real-world examples Gregor cites:

- Andrew Churchill, CTO at Weave, runs four different AI code-review tools in parallel. Their pattern: when a new engineer joins, do thorough human reviews to teach them the codebase and conventions. Once that engineer has the context, most of their later PRs are reviewed primarily by AI. Big productivity gains; team moves much faster.

- Raphaël Hoogvliets, Principal Engineer at Eneco, configured a coding agent with the prompt: "You are a staff engineer. Review this PR. If it meets production standards, approve it. If it doesn't, request changes with specific reasoning." He went further and based the agent's skill configuration on the CV of an actual strong staff engineer, encoding their judgment and standards into the agent. It worked well, and made the human-review bottleneck visible by contrast.

Gregor believes this is where the industry is heading by necessity: human review speed simply can't keep up with AI-driven generation speed. The upside if you get the guardrails right is enormous — imagine collapsing PR Cycle Time from 2 days to 20 minutes.

But there are real constraints:

- SOC 2 compliance. Companies that need SOC 2 typically adopt change-management controls that require at least one human engineer (not the author) to sign off on every PR. AI-only review won't satisfy those controls.

- Complex systems. As noted earlier, the more complex your codebase, the less useful AI is — including for reviewing. Productivity gains shrink in legacy or architecturally heavy environments.

- Speed vs. quality trade-off. Gregor's take: startups should bias toward speed (AI-heavy review); more established companies should bias toward quality (more human review). And every industry is different — what you're building and who you're building it for changes the right mix.

Last words

Code generation has gotten radically faster. If the review process doesn't evolve to match, reviews will throttle the entire team and you'll never realize AI's productivity benefits.

Don't try to overhaul everything at once. Start with one change — Gregor's recommended starting move is adding an AI code reviewer that runs automatically when a PR is opened. That alone removes a huge chunk of the friction and is the easiest win to ship.
