Engineering Leadership

How an AI-Native Startup From SF Works and Builds Its Product

Gregor Ojstersek

Apr 20, 2026

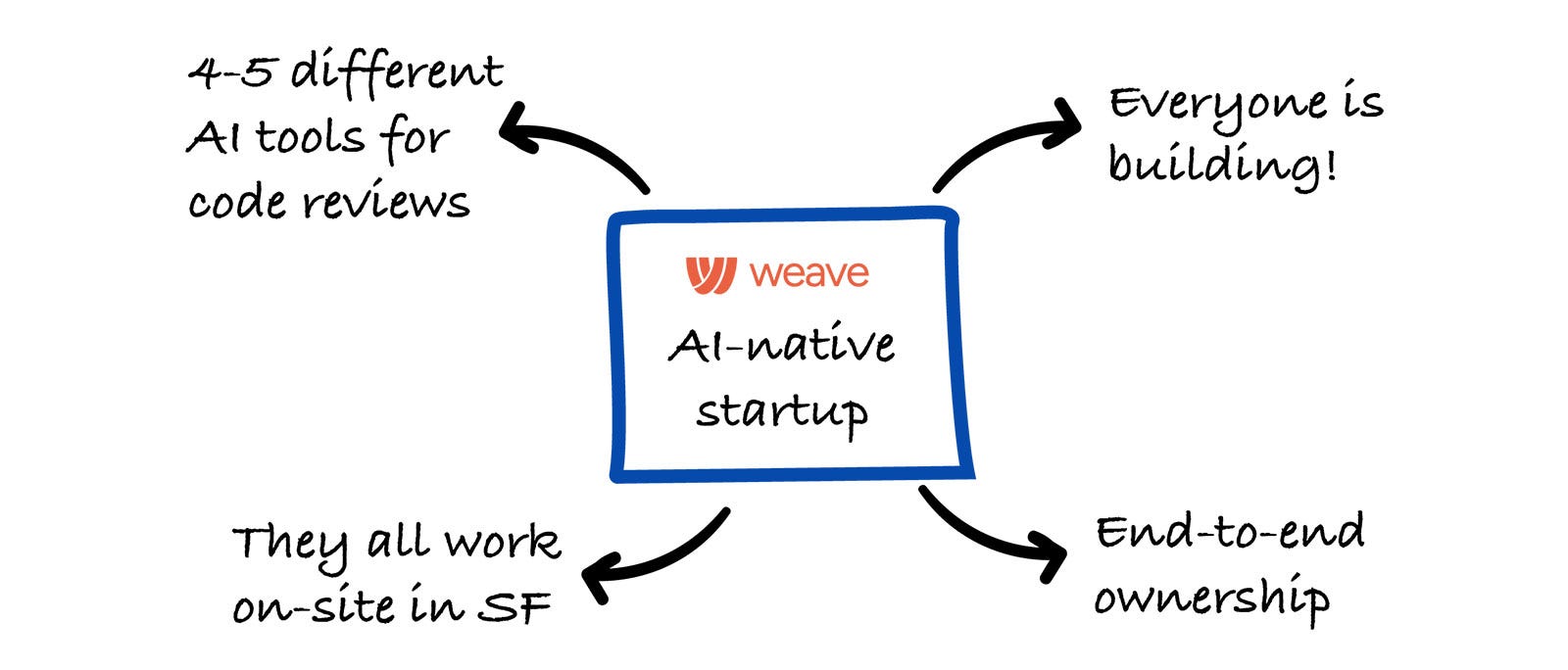

Gregor Ojstersek visited San Francisco and spent time inside Weave — a seed-stage, AI-native startup — talking with co-founders Andrew Churchill (CTO) and Adam Cohen (CEO), plus Brennan Lupyrypa (Founding GTM Engineer). This piece is a window into how a modern AI-first engineering team actually works day-to-day: their tools, their feature process, their reviewing culture, and where AI helps vs. where humans still drive.

What Weave is building

Weave is an engineering intelligence platform for the AI era: it analyzes what engineers do, how much work gets shipped, the quality of that work, and how effectively AI is being used — then turns those signals into insights teams can act on.

- Team size: 10 people, growing to 13–14 soon. All upcoming hires are engineers.

- Co-located: in-person in SF, typically 5+ days a week.

- Stack: React + TypeScript on the frontend, Go on the backend, Python for ML and agent work.

- Advanced area: they train their own models to analyze engineering work — building on top of open-source LLMs and applying fine-tuning and reinforcement learning.

Their office has a "hacker" vibe — multiple startups share the space, so it feels like a big group of builders all hacking together.

The tools they actually use

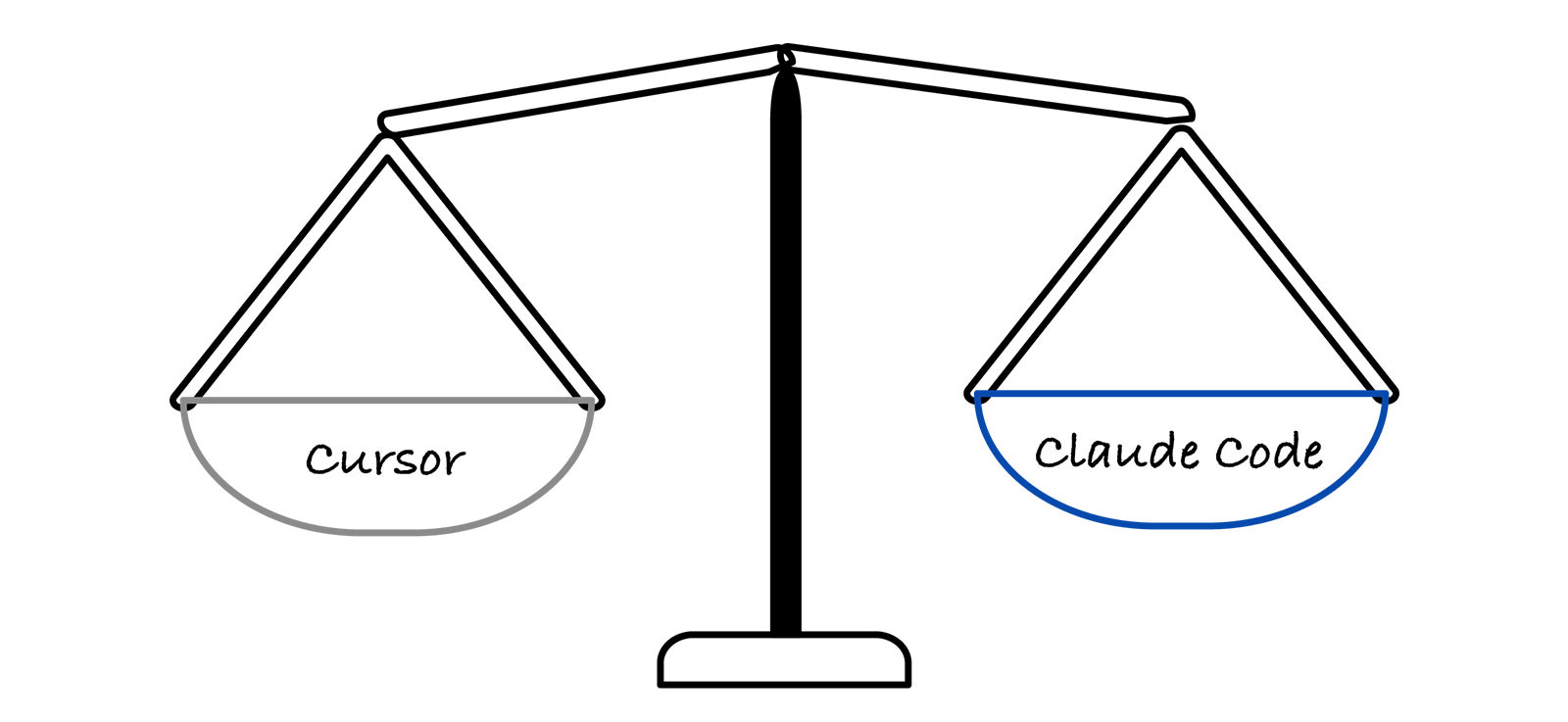

AI coding tools — Cursor and Claude Code

The day-to-day development workflow is split between Cursor and Claude Code. It's a personal preference, and engineers switch over time. Both are considered great, so instead of standardizing on one, the team focuses on building shared skills and context that work across both tools.

They have not built their own coding agents or background agents — at this stage, that's not the best use of time.

Two self-built internal tools

Instead of building agents, they invested in two high-leverage internal tools:

- A CLI for their full local development environment. Engineers can run migrations, connect to staging, and handle similar tasks on their own without pinging anyone, which removes friction from the inner loop.

- An internal admin dashboard built directly inside their app. They started with Retool but moved away from it and built their own. Now both technical and non-technical teammates can pull data, troubleshoot issues, toggle feature flags, or enable paywalls without involving an engineer. This dashboard turned out to be a major productivity boost — it enables self-service across the team, speeds up debugging, and is easy to extend whenever new needs arise.
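
The article does not show the CLI itself, so the following is only a minimal sketch of what a self-serve dev CLI with one subcommand per routine task might look like. The program name `devtool`, the subcommands, and the `plan` helper are all hypothetical, and the commands only describe what they would do rather than touching any real environment.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    """Build a parser with one subcommand per routine task."""
    parser = argparse.ArgumentParser(prog="devtool")  # hypothetical tool name
    sub = parser.add_subparsers(dest="command", required=True)

    migrate = sub.add_parser("migrate", help="run pending database migrations")
    migrate.add_argument("--env", choices=["local", "staging"], default="local")

    connect = sub.add_parser("connect", help="open a session against an environment")
    connect.add_argument("env", choices=["local", "staging"])
    return parser


def plan(argv: list[str]) -> str:
    """Return a description of what would run; the real work is left out on purpose."""
    args = build_parser().parse_args(argv)
    if args.command == "migrate":
        return f"would run migrations against {args.env}"
    return f"would connect to {args.env}"


print(plan(["migrate", "--env", "staging"]))
```

Keeping every task behind a named subcommand is one way to make the tool discoverable through `--help`, for engineers and for coding agents alike.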

PM and docs

- Linear is the main backlog/prioritization tool, but it only holds about 60% of their work. Speed is a core priority — if something can be done immediately, they just do it without making a ticket. Linear is reserved for work not handled right away, and they tie customer requests to those tickets. They lean on integrations heavily (e.g., turning Slack discussions into tickets without opening the Linear UI). It's intentionally lightweight, not a full task-tracking system.

- Notion is used, but not much for engineering. They prefer keeping documentation close to the code rather than in a separate tool — easier to maintain, and more accessible to their AI agents (which is a key reason: agents can pick up context from in-repo docs more reliably).

How they build a feature from 0 → 1

For complex work, they kick off with a quick 30-minute discussion: gather around a whiteboard, sketch ideas, align on the MVP, decide who the first users are, and how they'll validate.

A few principles drive what happens next:

- Single-owner projects. Because the team is small, most projects are owned by one person. When multiple people are involved, the work is split into parallel, loosely-connected streams — not tightly coordinated efforts.

- Ship early behind flags. Features go live behind feature flags and are dogfooded internally first (they're building for engineers and use the product themselves daily, so internal testing is a meaningful signal).

- Customer-driven? Share rough. If a customer requested it, they'll build a rough version and share it with that customer, then iterate quickly until it's broadly releasable.

- No heavy specs/PRDs. They rarely write detailed product requirement docs. They rely on clear direction, high ownership, and fast iteration instead.
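
The rollout path described above (internal dogfooding first, then a rough version for the requesting customer, then a broad release) can be sketched as a staged feature flag. This is an illustration under assumptions, not Weave's implementation: the `Stage` and `Flag` names, the flag name, and the organization names are all made up.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    INTERNAL = 1  # dogfooding: only the team sees the feature
    PREVIEW = 2   # rough version shared with the customers who asked for it
    GENERAL = 3   # released to everyone


@dataclass
class Flag:
    name: str
    stage: Stage = Stage.INTERNAL
    preview_orgs: set[str] = field(default_factory=set)

    def enabled_for(self, org: str, is_internal: bool = False) -> bool:
        """Decide whether this org sees the feature at the current stage."""
        if self.stage is Stage.GENERAL:
            return True
        if is_internal:
            return True  # the team sees every flagged feature
        if self.stage is Stage.PREVIEW:
            return org in self.preview_orgs
        return False


flag = Flag("example-feature")  # hypothetical flag name
print(flag.enabled_for("acme"))                        # False
print(flag.enabled_for("team", is_internal=True))      # True

flag.stage = Stage.PREVIEW
flag.preview_orgs.add("acme")
print(flag.enabled_for("acme"))                        # True
print(flag.enabled_for("globex"))                      # False
```

Moving a feature forward is then a data change (the stage and the preview list) rather than a code change, which fits the idea of toggling flags from an admin dashboard.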

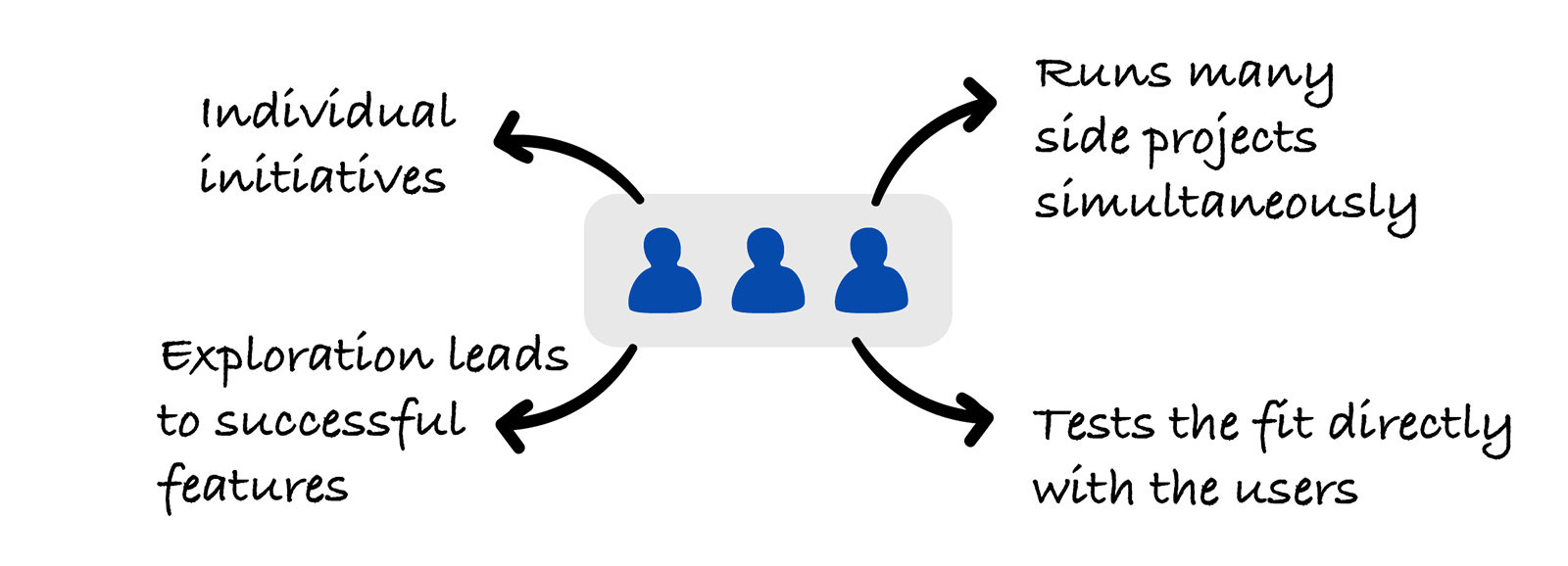

Engineers are empowered to ship things they believe in

Many features start as small side projects rather than formal initiatives. The internal admin dashboard itself wasn't planned as a project — it grew gradually as a "side quest", with agents running in the background and occasional check-ins between main tasks.

Andrew gave another example: a custom dashboard feature emerged because one engineer got really interested in it, built it in parallel with their main work, tested with a few users, and then rolled it out broadly. The pattern — important features grow organically out of engineer-driven exploration — is common at Weave.

How they decide what to build vs. not

Weekly prioritization session: review everything that came in, decide what matters most, and make sure the highest-priority items match what engineers are actually working on. They also use this slot (plus occasional longer discussions) to set broader direction — key bets, where the product is headed, what customers are signaling.

Compared to traditional workflows where alignment happens monthly or quarterly, Weave aligns weekly. The reason is mechanical: in a pre-AI world, planning and execution were expensive, so you couldn't afford to re-plan often. With AI tools speeding execution way up, planning has to keep pace — otherwise the plans are out of date by the time they're acted on.

This works because engineers aren't blocking everything else to chase one idea. They explore multiple ideas in parallel, so new bets can be tested without disrupting core priorities.

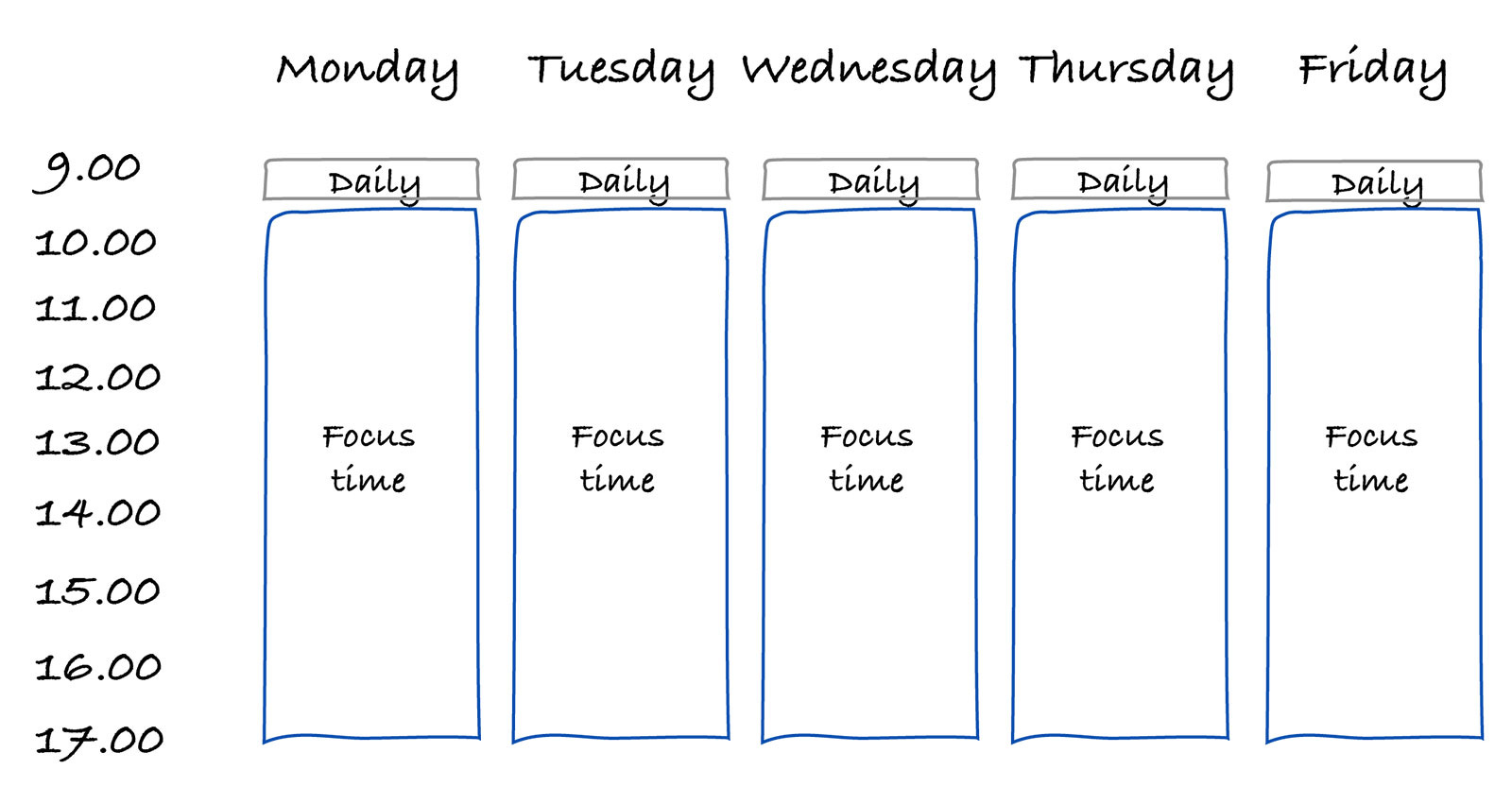

They are aggressively anti-meetings

Long, fragmented days kill focus. So:

- The only standing meeting is a daily 9 AM stand-up with the whole company.

- Project kickoffs usually run for 30 minutes right after stand-up, so they don't break up the rest of the focus block.

Most collaboration is informal. Engineers sit in the same area, so they swivel chairs and discuss in real time instead of scheduling a meeting. They genuinely prefer in-office for the energy and connection while tackling hard problems together.

Planning horizon: not more than a month ahead

They have strategic pillars for the next 3–6 months and a vision for 1–5 years, but they don't plan projects in detail beyond a month. The reasoning is honest: things change too quickly. Agent observability, for example, is a meaningful area for them today but wasn't even on the radar a couple of months ago. Long-term detailed plans would just become outdated, so they stay flexible and adapt as new opportunities surface.

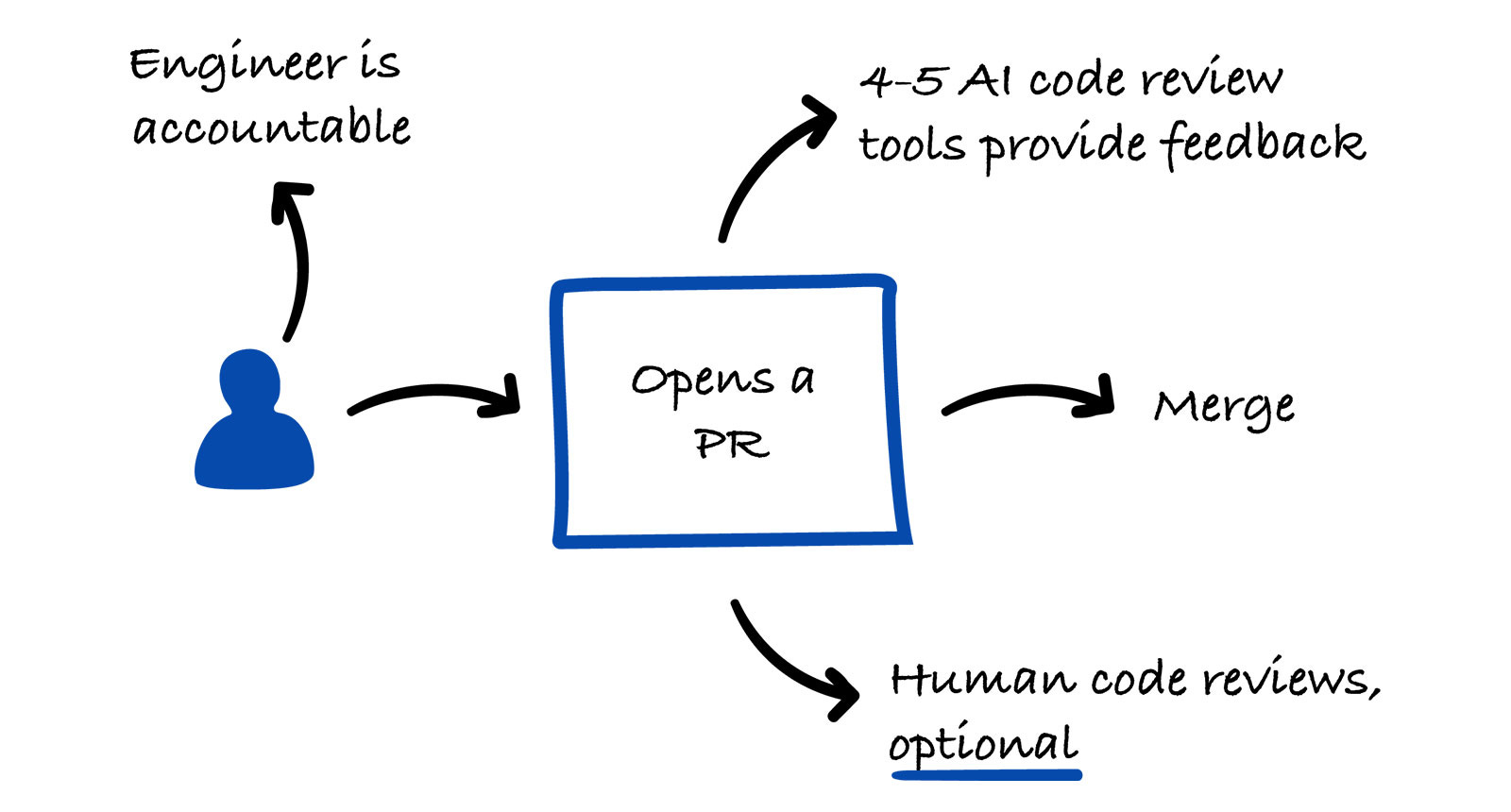

Code review: humans optional, AI mandatory

This is one of the more striking parts of the piece. Human code review is not required. Instead, every PR is reviewed by multiple AI tools running in parallel.

The logic: running multiple reviewers in parallel raises the odds of catching real issues. Even if just one of them flags something important, the cost of running them all is worth it. They plan to build their own internal version inspired by Continue's review tool.
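
A rough way to quantify that logic, under the simplifying assumption (not made in the article) that reviewers miss issues independently: if reviewer $i$ catches a given issue with probability $p_i$, the chance that at least one of $n$ reviewers catches it is

$$
P(\text{caught}) = 1 - \prod_{i=1}^{n} (1 - p_i)
$$

For example, three reviewers that each catch a given issue half the time would together catch it with probability $1 - 0.5^3 = 0.875$. Reviewers built on similar models probably share blind spots, so the real gain is likely smaller than the independent case suggests.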

They also continuously evaluate which reviewers are effective, and encode recurring issue patterns into their AI reviewers so feedback is automated as much as possible. Engineers can still ask a teammate to review when they want input — it's just not gated.
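
As a minimal sketch of the fan-out pattern, assuming each reviewer can be modeled as a function from a diff to a list of findings: the three toy reviewers below are simple string checks standing in for real AI review tools, and every name here is hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# A reviewer takes a diff and returns a list of findings.
Reviewer = Callable[[str], list[str]]


def flags_todo(diff: str) -> list[str]:
    return ["leftover TODO"] if "TODO" in diff else []


def flags_print(diff: str) -> list[str]:
    return ["debug print left in"] if "print(" in diff else []


def flags_bare_except(diff: str) -> list[str]:
    return ["bare except"] if "except:" in diff else []


def review_in_parallel(diff: str, reviewers: list[Reviewer]) -> list[str]:
    """Run every reviewer on the same diff and merge the findings."""
    with ThreadPoolExecutor(max_workers=max(1, len(reviewers))) as pool:
        results = list(pool.map(lambda review: review(diff), reviewers))
    # Sort and de-duplicate so overlapping reviewers do not repeat a finding.
    return sorted({finding for findings in results for finding in findings})


diff = "print(x)  # TODO remove\n"
print(review_in_parallel(diff, [flags_todo, flags_print, flags_bare_except]))
```

Recurring issue patterns can then be encoded by adding another function to the list, without touching the fan-out logic.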

How new engineers are onboarded into this

The first few PRs from a new hire are reviewed closely, line by line, to build alignment and ensure they understand the codebase. After a couple of PRs, reviews get lighter quickly and engineers operate independently soon after. The expectation is explicit: engineers own their code, so they have to understand it and take responsibility for it.

The tradeoff is real: dropping mandatory human reviews increases speed but introduces some quality risk. Bugs do happen — but they're typically fixed within minutes, which preserves user trust. They're investing more in automated and AI-driven QA to reduce the risk further as the team grows.

Deep system understanding is non-negotiable

Even though they're heavily AI-native, Andrew is clear: deeply understanding the tech and the domain is essential. Weave draws a sharp line between two modes:

- "Vibe coding" — letting AI tools run free, blindly accepting outputs.

- AI-assisted engineering — engineers with strong system-level understanding using AI tools deliberately, guiding agents with intention, and using context and judgment to make sure solutions fit the broader system.

Without that system-level understanding, leaning on AI quickly produces messy, hard-to-maintain code. The skill is in coordinating AI agents, not surrendering to them.

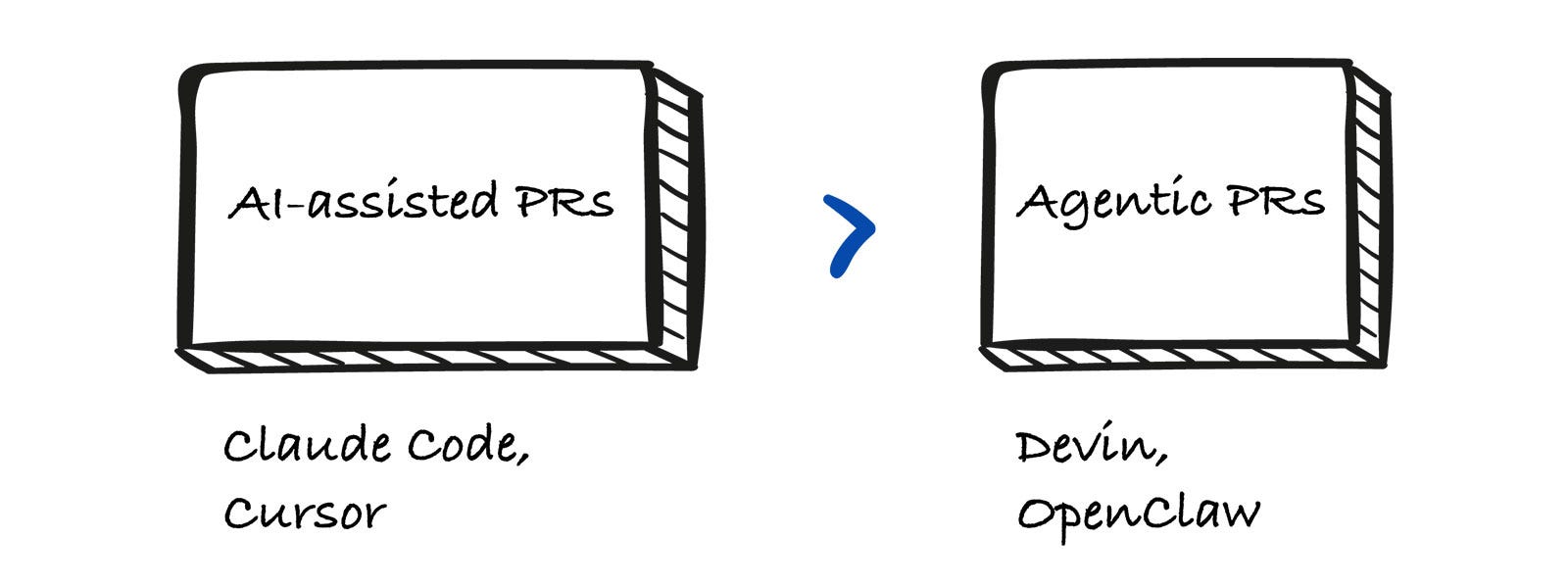

AI-assisted PRs, not agentic PRs

There's a distinction worth understanding:

- Agentic PRs — tools like Devin or OpenClaw can be assigned a GitHub user and open PRs autonomously based on a requirement. The human reviews the finished PR.

- AI-assisted PRs — the engineer drives, using Cursor or Claude Code, with AI doing most of the typing. The engineer opens the PR.

Weave prefers AI-assisted. Andrew said reviewing fully agentic PRs is draining — you have to closely audit a black box and then wait for fixes. With Cursor/Claude Code in the loop, the engineer builds context as the change is being made, so they understand and can verify it as it happens, not after the fact.

Production debugging — a great fit for AI

Debugging used to mean manually digging through logs, session recordings, and data sources. Now, tools like Claude Code can often identify the root cause in a single pass.

The key enabler is structured access to the right systems. They give AI:

- production logs,

- a read-only replica of the database,

- monitoring tools — they use SigNoz, an open-source alternative to Datadog,

- codebase context.

They take a "trust but verify" approach. AI usually lands the correct root cause; when it doesn't, reading its reasoning still shortens the human debug path significantly. Net effect: support rotations are much less time-consuming, and engineers on rotation can spend time on other work.

~75% of their code is AI-generated

Andrew literally pulled out his phone during the conversation: last week, 75% of their code was AI-generated. Manually typing code in the IDE is now the exception. (Andrew jokes he's probably the most likely to fall back to manual typing — out of habit.)

Other places AI shows up

- Planning and research. Before a new project, they run deep research to compare approaches and existing solutions. Example: while training a recent model, they spent meaningful time researching different reinforcement learning methods to figure out what would (or wouldn't) work for their case.

- Recruiting. Most steps are automated or AI-assisted. Outbound sourcing is handled by agents. Take-home assignments are graded by AI — and accurately enough that they stopped double-checking manually. Candidates are allowed to use AI on take-homes since that mirrors real work; the only AI-free step is a problem-solving technical interview.

- GTM internal tools. The GTM team builds its own automation — for example, a LinkedIn messaging flow where prospect replies land in Slack with AI-generated reply suggestions that the team can quickly review and edit. Things like this get built and shipped quickly by people outside engineering, which would have been much harder pre-AI.

Where AI is not working well for them

A useful, honest list:

- Building user interfaces. AI defaults to patterns from its training data, which often don't match Weave's product style or quality bar. Left unguided, the UI feels slightly off. They actively guide the output to match their design taste and keep visual consistency.

- Bigger architectural decisions. AI struggles when the required context can't fit into a single context window. Architecture needs system-wide understanding, long-term thinking, and judgment — exactly where humans add the most value. They believe closing this gap would unlock a lot more from AI agents.

- Estimations. A funny one: AI is terrible at estimating effort. Because it's trained on human data, it gives traditional human-scale timelines — phases that "sound like weeks" — when the same work might actually take it minutes. That mismatch makes AI's own estimates basically useless.

Takeaway

What stood out most: everything at Weave is intentional. They aren't using AI because it's hot — they're rebuilding how an engineering team operates around it. Speed is prioritized, but never at the cost of understanding. Engineers have high autonomy, but it's paired with strong ownership. AI does a large share of the work, but humans are firmly in the driver's seat for judgment, context, and direction.
